Fluidic-Precision Problems That Ruin Repeatable Dosing

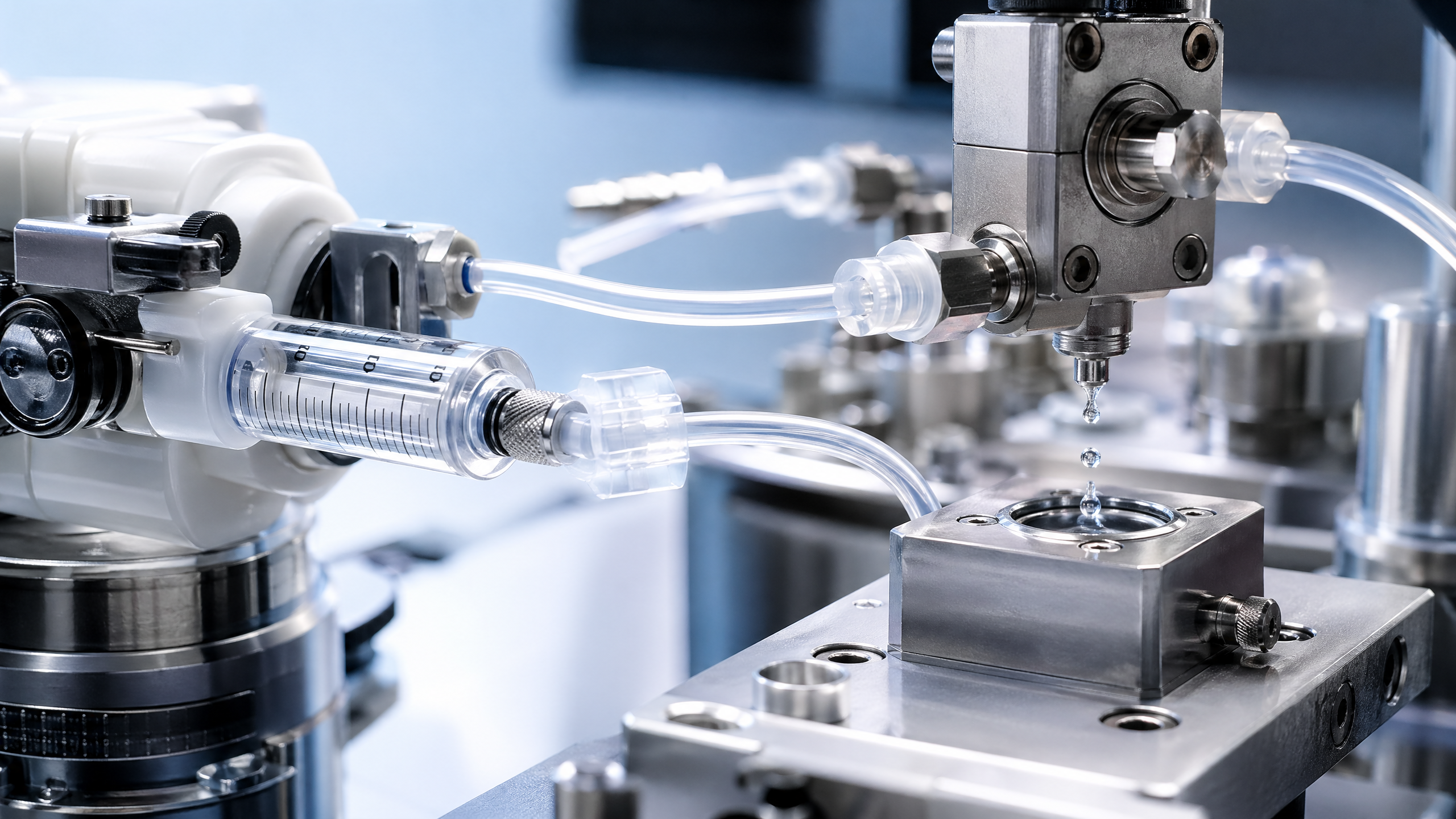

Repeatable dosing fails when fluidic precision is compromised by hidden variables across the R&D-to-production transition. For lab-scale production leaders in bioprocess engineering, even minor drift in sub-microliter precision dispensers or automated liquid handling systems, or gaps in calibration against ISO standards, can trigger costly inconsistencies. This article examines the fluidic-precision problems that undermine repeatability and what decision-makers must benchmark before scaling.

For information researchers, commercial evaluators, quality managers, and project leaders, repeatable dosing is not a narrow equipment issue. It affects batch release confidence, process transfer speed, validation effort, operator workload, and procurement risk. In regulated lab-scale and pilot environments, a deviation of even 0.5% to 2.0% in delivered volume can distort concentration profiles, cell response, reaction kinetics, or downstream separation behavior.

The challenge is that dosing inconsistency rarely comes from one obvious defect. It is usually created by a chain of small fluidic-precision problems: dead volume, pulsation, tubing fatigue, thermal drift, viscosity mismatch, software timing gaps, or weak calibration discipline. When these variables accumulate across development, tech transfer, and production readiness, repeatability collapses long before the issue becomes visible in final quality data.

Where Repeatable Dosing Breaks Down in Real Lab-to-Production Workflows

In most B2B laboratory and pilot-scale settings, dosing repeatability fails at interface points rather than at isolated components. A dispenser may perform within specification during factory acceptance testing, yet deliver unstable results once connected to a reactor feed line, a microfluidic chip, or a liquid handling deck. The practical problem begins when system-level fluidic behavior differs from bench validation conditions.

Common breakdown points include startup priming, low-volume aspiration, line switching, and long-duration dosing runs beyond 2 to 4 hours. At sub-microliter to low-milliliter scales, trapped air, seal wear, and pressure fluctuation have a magnified effect. A 50 µL error may be negligible in a large transfer, but the same absolute deviation is a 10% error on a 500 µL dose and ten times the entire target volume in a 5 µL application, enough to materially alter assay performance or formulation balance.
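The scaling effect is easy to check with a few lines of arithmetic. The sketch below is illustrative only: the function name is hypothetical, and the 50 mL "large transfer" is an assumed value chosen for contrast, not a figure from any specific process.

```python
def relative_error_pct(abs_error_ul: float, target_ul: float) -> float:
    """Return an absolute volume error as a percentage of the target dose."""
    return 100.0 * abs_error_ul / target_ul


# The same 50 µL absolute error against three target volumes.
# 50,000 µL (50 mL) is an assumed example of a "large transfer".
for target_ul in (50_000.0, 500.0, 5.0):
    pct = relative_error_pct(50.0, target_ul)
    print(f"target {target_ul:>8.1f} µL -> {pct:.1f}% relative error")
```

Expressing tolerances as a percentage of target, rather than as a fixed volume, keeps acceptance limits comparable when the same hardware is used across very different dose ranges.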

Bioprocess engineering teams often encounter repeatability loss during three transition stages: method development, pilot verification, and scale-up handoff. During method development, the dosing profile may be optimized around one fluid class. During pilot verification, the process starts handling broader viscosity ranges or temperature shifts. At scale-up handoff, operators, tubing lengths, and environmental controls change, creating new variability that was never stress-tested.

Typical hidden variables that distort delivered volume

- Viscosity variation across buffers, solvents, cell media, or suspensions, often changing flow response by 5% to 15% if compensation is not configured.

- Dead volume in fittings, manifolds, valves, and tubing, especially when frequent switching creates carryover or lag.

- Air bubble formation during aspiration or recirculation, which can introduce intermittent under-delivery over 10 to 100 cycles.

- Temperature drift of 3°C to 8°C, changing fluid density, evaporation rate, and pump mechanics in open or semi-open systems.

- Control latency between software instruction and mechanical actuation, particularly in synchronized multi-channel dispensing.

Another frequent failure mode is assuming that repeatability equals average accuracy. In practice, a system may hit the target mean over 30 runs while still showing unacceptable cycle-to-cycle variation. For quality and safety managers, this matters because process capability depends on both bias and spread. If the coefficient of variation rises beyond internal thresholds, downstream control plans become more expensive and less reliable.
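The difference between bias and spread can be made concrete with a short calculation. This is a minimal sketch, assuming delivered volumes are already available as a list of measurements in µL; the function name and both data series are hypothetical, and six shots are used instead of 30 only to keep the example short.

```python
import statistics


def dosing_summary(volumes_ul, target_ul):
    """Summarize a dosing series as bias (accuracy) and CV (repeatability)."""
    mean = statistics.fmean(volumes_ul)
    sd = statistics.stdev(volumes_ul)  # sample standard deviation (n - 1)
    return {
        "bias_pct": 100.0 * (mean - target_ul) / target_ul,
        "cv_pct": 100.0 * sd / mean,
    }


# Two made-up series with the same mean (10 µL) but very different spread.
tight = [9.9, 10.1, 10.0, 10.0, 9.9, 10.1]
loose = [9.0, 11.0, 10.5, 9.5, 8.8, 11.2]

for name, series in (("tight", tight), ("loose", loose)):
    result = dosing_summary(series, target_ul=10.0)
    print(f"{name}: bias {result['bias_pct']:+.2f}%, CV {result['cv_pct']:.2f}%")
```

Both series show essentially zero bias, yet the second has a CV more than ten times larger. Reporting only the mean would hide that difference.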

Why the same hardware performs differently across sites

Two sites using similar automated pipetting or precision dosing hardware can still report different outcomes due to setup discipline. Tube routing, maintenance intervals, fluid conditioning, operator training, and environmental stability all affect repeatable dosing. Site A may recalibrate every 30 days and validate at three volume points, while Site B may stretch maintenance to 90 days and test only at nominal volume. The hardware did not change, but dosing confidence did.

For procurement and project stakeholders, this means a capital purchase decision should not focus only on headline accuracy claims. Repeatability must be evaluated as a system property under real process conditions, not just as a catalog specification.

The Main Fluidic-Precision Failure Modes Decision-Makers Should Benchmark

A practical benchmark framework starts with failure modes that directly affect delivered dose. These are the variables most likely to create inconsistency during R&D-to-production transition. Instead of treating all fluidic-precision problems as generic drift, decision-makers should separate them into mechanical, fluid-property, control-system, and maintenance-related categories.

The table below outlines the failure modes most relevant to lab directors, procurement officers, and quality teams when comparing precision liquid handling systems, dispensers, and connected reactor or microfluidic platforms.

The key takeaway is that benchmarking must go beyond nominal accuracy. A supplier should be able to discuss repeatability across volume ranges, fluid classes, and run duration. If a platform only provides ideal-condition data, commercial evaluators should treat that as incomplete evidence, especially for processes that involve media, suspensions, or multi-step reagent dosing.

Critical benchmark questions before scale-up

- What is the repeatability range at minimum, nominal, and maximum target dose volumes?

- How does the system perform over 50, 100, or 500 consecutive cycles?

- What compensation methods are available for viscosity, temperature, and pressure variation?

- Which wetted materials are used, and how do they behave with solvents, biologics, or corrosive chemistries?

- How often must calibration, seal replacement, or tubing inspection be performed to maintain performance?
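When a supplier provides cycle-by-cycle data, the consecutive-cycle question above can be screened with a simple window comparison. The sketch below is one possible approach, not a standard method: the function name, the 10-cycle window, and the synthetic run are all assumptions for illustration.

```python
import statistics


def window_drift_pct(volumes_ul, window=10):
    """Percent change in mean delivered volume between the first and last
    `window` cycles of a run. Negative values indicate under-delivery drift."""
    if len(volumes_ul) < 2 * window:
        raise ValueError("need at least two full windows of data")
    first = statistics.fmean(volumes_ul[:window])
    last = statistics.fmean(volumes_ul[-window:])
    return 100.0 * (last - first) / first


# Synthetic 100-cycle run: 20 µL target with a slow linear loss of
# 0.005 µL per cycle (invented data, e.g. to mimic gradual tubing fatigue).
run = [20.0 - 0.005 * cycle for cycle in range(100)]
print(f"drift over run: {window_drift_pct(run):+.2f}%")
```

Repeating the same check at minimum, nominal, and maximum dose volumes shows whether drift is uniform or concentrated at one end of the range.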

For cross-functional teams, this benchmarking approach reduces the risk of buying equipment that looks precise on paper but becomes unstable under actual production-adjacent operating conditions.

Why Calibration, Standards Alignment, and Software Control Matter More Than Many Teams Expect

Calibration is often treated as an annual or quarterly compliance event, but repeatable dosing requires a much tighter discipline. In many lab-scale production environments, a fixed calendar approach is not enough. Recalibration should also be triggered by a fluid change, a maintenance event, a major software update, or a shift in target volume range. A system calibrated for aqueous buffer may not maintain equivalent precision with a viscous formulation or solvent-rich feed.
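Combining calendar-based and event-based triggers can be expressed as a small rule check. The following is a minimal sketch under stated assumptions: the `DoserState` record, its field names, and the 30-day default interval are hypothetical and would need to match a site's own procedures.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class DoserState:
    """Hypothetical record of what has changed since the last calibration."""
    last_calibration: date
    calibrated_fluid: str
    current_fluid: str
    maintenance_since_cal: bool = False
    software_updated_since_cal: bool = False
    volume_range_changed: bool = False


def recalibration_reasons(state, today, max_interval_days=30):
    """Return every reason recalibration is due; an empty list means none."""
    reasons = []
    if today - state.last_calibration > timedelta(days=max_interval_days):
        reasons.append("calendar interval exceeded")
    if state.current_fluid != state.calibrated_fluid:
        reasons.append("fluid changed")
    if state.maintenance_since_cal:
        reasons.append("maintenance event")
    if state.software_updated_since_cal:
        reasons.append("software update")
    if state.volume_range_changed:
        reasons.append("target volume range changed")
    return reasons


# Ten days after calibration, but the fluid class has changed.
state = DoserState(
    last_calibration=date(2024, 1, 10),
    calibrated_fluid="aqueous buffer",
    current_fluid="viscous formulation",
)
print(recalibration_reasons(state, today=date(2024, 1, 20)))
```

Returning the list of reasons, rather than a single yes/no flag, also gives the calibration record an explanation of why each recalibration was performed.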

Alignment with ISO, USP, and GMP-oriented practices is especially important when dosing data will influence process validation or transfer packages. Even if a lab instrument is not itself a regulated production asset, the evidence it generates may drive decisions on acceptable feed rate, reagent ratio, or residence time. Weak calibration records therefore create both technical and documentary risk.

Software is another underestimated source of fluidic-precision problems. Timing errors measured in milliseconds can matter when channels are synchronized, when pulse timing affects droplet formation, or when microfluidic events occur in narrow windows. If firmware, scheduler logic, or sensor polling rate is poorly matched to mechanical actuation, the result is a repeatable command but a non-repeatable dose.
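To see why milliseconds matter, consider a simplified case where the dose is set by how long a valve stays open at a constant flow rate. Under that assumption, which will not hold for every dispensing principle, the volume error is the flow rate multiplied by the timing error. The function names and example values below are hypothetical.

```python
def timing_volume_error_ul(flow_rate_ul_per_s: float, timing_error_ms: float) -> float:
    """Volume error caused by a timing error, assuming constant flow
    while the valve is open (a simplification)."""
    return flow_rate_ul_per_s * timing_error_ms / 1000.0


def timing_relative_error_pct(target_ul: float, flow_rate_ul_per_s: float,
                              timing_error_ms: float) -> float:
    """The same timing error expressed as a percentage of the target dose."""
    error_ul = timing_volume_error_ul(flow_rate_ul_per_s, timing_error_ms)
    return 100.0 * error_ul / target_ul


# Illustrative values: 100 µL/s flow, 5 ms timing error, 10 µL target dose.
print(timing_volume_error_ul(100.0, 5.0))           # error in µL
print(timing_relative_error_pct(10.0, 100.0, 5.0))  # error in % of target
```

With these assumed numbers, a 5 ms error corresponds to 0.5 µL, or 5% of a 10 µL dose. If the timing error varies from cycle to cycle, it contributes directly to spread rather than to a correctable offset.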

What a stronger control and calibration regime should include

A robust program typically validates at 3 volume points, 2 fluid classes, and at least 1 stress condition such as extended run time or elevated viscosity. Many organizations also set internal warning and action limits. For example, a warning threshold may be triggered when repeatability drifts above a defined coefficient of variation, while an action threshold stops use pending maintenance or recalibration.
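The validation matrix and the two-tier limits described above can be sketched as follows. The threshold values, fluid names, and function name are placeholders chosen for illustration; real limits should come from the process's own capability requirements.

```python
from itertools import product

# Hypothetical internal limits on coefficient of variation (percent).
WARNING_CV_PCT = 1.0
ACTION_CV_PCT = 2.0


def classify_cv(cv_pct, warning=WARNING_CV_PCT, action=ACTION_CV_PCT):
    """Map a measured CV onto ok / warning / action status."""
    if cv_pct > action:
        return "action"   # stop use pending maintenance or recalibration
    if cv_pct > warning:
        return "warning"  # keep running, but investigate and recheck
    return "ok"


# 3 volume points x 2 fluid classes, plus 1 stress condition.
volume_points = ("min", "nominal", "max")
fluid_classes = ("aqueous buffer", "viscous formulation")
validation_matrix = [
    (volume, fluid, "standard")
    for volume, fluid in product(volume_points, fluid_classes)
]
validation_matrix.append(("nominal", "viscous formulation", "extended run"))

for row in validation_matrix:
    print(row)
print(classify_cv(1.4))
```

Writing the matrix down explicitly makes it obvious when a qualification run has covered only one cell of it.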

The practical conclusion is simple: repeatable dosing is sustained by control discipline, not just precision hardware. Procurement teams should therefore evaluate serviceability, logging depth, and calibration workflows alongside mechanical specifications.

A frequent misconception in system qualification

Many teams validate a dispenser at one target volume, under one fluid condition, on one day. That proves the instrument can perform, but not that the process will remain stable across a 6-week development campaign or during a pilot run with multiple operators. Real qualification should reflect operating reality, not just acceptance protocol convenience.

How to Evaluate Precision Dosing Systems for Procurement and Project Execution

For B2B buyers, precision dosing equipment selection should be treated as a lifecycle decision. The right platform is not always the one with the highest advertised resolution. It is the one that maintains repeatable dosing across real fluids, realistic maintenance intervals, and expected project timelines. This is especially important when the equipment supports sensitive reactors, cell culture workflows, microfluidic development, or automated formulation screening.

A structured evaluation model helps procurement officers and project leaders compare options without over-weighting purchase price. In many cases, a lower upfront cost is offset by higher recalibration frequency, shorter consumable life, or reduced compatibility with future process transfer requirements.

The following comparison framework is useful when screening automated pipetting systems, sub-microliter dispensers, and integrated fluidic modules for lab-scale production or pilot use.

This evaluation method also clarifies cross-functional ownership. Engineering may prioritize integration and flow stability, QA may focus on traceability and verification, while procurement may compare service commitments and spare parts continuity over 12 to 36 months. A decision framework that captures all three views prevents late-stage surprises.

A practical 5-step procurement review

- Define target dose range, fluid classes, cycle frequency, and run duration.

- Request benchmark data under conditions close to your process, not generic demo data.

- Review calibration workflow, maintenance burden, and spare-part lead time.

- Assess software traceability, integration readiness, and update control.

- Run a site-specific acceptance plan before full deployment or scale transfer.

For organizations managing high-value R&D-to-production transitions, this approach supports more defensible capital decisions and lowers the probability of hidden fluidic-precision failures surfacing during qualification or pilot campaigns.

Implementation Priorities, Common Mistakes, and FAQ for Scaling Repeatable Dosing

Once a system is selected, implementation quality determines whether repeatable dosing is sustained. The first 30 to 60 days are critical. This is when line routing, operator habits, cleaning cycles, calibration baseline, and software permissions are established. If these controls are weak at startup, even a technically strong dosing platform can produce unstable results that become normalized as “routine variation.”

Three implementation priorities stand out. First, verify the installed configuration against the validated configuration, including tubing length, fittings, and environmental conditions. Second, qualify performance using the actual fluid set, not surrogate liquids alone. Third, create a response plan for drift events, including warning thresholds, recheck intervals, and maintenance escalation paths.

A common mistake is over-automating before the fluidic behavior is fully understood. Teams sometimes assume that adding automation will eliminate operator variability, but automation can also lock in hidden errors at higher throughput. Another mistake is focusing only on average delivered volume while ignoring edge cases such as first-shot performance, end-of-run drift, or behavior after cleaning and restart.

Common errors that ruin repeatability after installation

- Using one calibration setting across fluids with clearly different viscosities or densities.

- Extending tubing replacement beyond recommended life to reduce short-term cost.

- Skipping post-maintenance verification on the assumption that service restores original performance automatically.

- Ignoring environmental fluctuations such as temperature swings between 20°C and 28°C in open lab areas.

- Failing to document software updates that may alter timing, channel mapping, or synchronization behavior.

How do you know whether a dosing system is ready for scale transfer?

A useful readiness test includes at least 3 conditions: nominal fluid, worst-case fluid, and extended-run verification. Teams should also confirm repeatability over a meaningful cycle count, not just 5 or 10 test shots. If the process is sensitive, check both startup and steady-state performance because many systems behave differently in the first few cycles.
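Startup and steady-state behavior can be separated with a simple split of the cycle data. This is a rough sketch: the five-cycle startup window, the function name, and the synthetic series are assumptions, and the right window length depends on the system's priming behavior.

```python
import statistics


def phase_report(volumes_ul, target_ul, startup_cycles=5):
    """Compare bias in the first `startup_cycles` shots with bias and CV
    in the remaining steady-state shots."""
    startup = volumes_ul[:startup_cycles]
    steady = volumes_ul[startup_cycles:]

    def bias_pct(series):
        return 100.0 * (statistics.fmean(series) - target_ul) / target_ul

    return {
        "startup_bias_pct": bias_pct(startup),
        "steady_bias_pct": bias_pct(steady),
        "steady_cv_pct": 100.0 * statistics.stdev(steady) / statistics.fmean(steady),
    }


# Synthetic 5 µL run: the first shots under-deliver, then the system settles.
shots = [4.6, 4.8, 4.9, 4.95, 5.0] + [4.98, 5.02] * 25
report = phase_report(shots, target_ul=5.0)
for key, value in report.items():
    print(f"{key}: {value:+.2f}")
```

In this made-up series the steady-state data look well controlled, while the startup shots carry a clear negative bias that a whole-run average would dilute.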

What procurement metric is most often underestimated?

Maintenance burden is commonly underestimated. A system with excellent nominal precision may still create operational friction if seals require frequent replacement, if tubing changeovers take 45 minutes instead of 10, or if spare parts lead times exceed 2 to 3 weeks. These factors directly affect uptime and project scheduling.

How often should repeatability be reverified?

There is no universal rule, but many organizations use a combination of time-based and event-based checks. A typical practice is a formal verification every 30 to 90 days, plus additional checks after maintenance, software update, fluid change, or unexplained quality drift. Higher-risk applications may require weekly quick checks using defined reference conditions.

Fluidic-precision problems that ruin repeatable dosing are rarely random. They arise from measurable interactions among hardware, fluids, software, maintenance, and operating discipline. For lab directors, quality leaders, and project decision-makers, the strongest strategy is to benchmark real process conditions, validate across multiple stress points, and select systems that remain stable through the full R&D-to-production transition.

G-LSP supports this decision process by focusing on technical benchmarking, fluidic consistency, and the practical requirements of sensitive scale-up environments across pharmaceuticals, chemicals, microfluidics, and automated liquid handling workflows. If your team is evaluating precision dosing platforms, scaling a transfer package, or tightening repeatability controls, now is the right time to get a tailored benchmark view. Contact us to discuss your application, compare solution paths, and explore a more reliable route to repeatable dosing at scale.

Expert Insights

Chief Security Architect

Dr. Thorne specializes in the intersection of structural engineering and digital resilience. He has advised three G7 governments on industrial infrastructure security.
