How Decentralizing Labs Changes Equipment Buying Priorities

As R&D and pilot production move closer to regional markets, the impact of decentralizing labs on equipment buying priorities is becoming impossible for enterprise leaders to ignore. From fluidic precision and compliance readiness to scalability across distributed sites, procurement decisions now demand far more than price comparison. This shift is redefining how pharmaceutical and chemical organizations evaluate lab infrastructure for speed, consistency, and long-term operational resilience.

Why is the impact of decentralizing labs on equipment getting so much attention now?

The short answer is that decentralized lab networks change the operating model behind scientific work. Instead of concentrating analytical development, pilot production, process validation, and formulation support in one flagship facility, many enterprises now spread these functions across regional sites. That may improve market responsiveness, support localized compliance, and reduce supply risk, but it also makes equipment selection far more strategic.

For decision-makers, decentralization means more than buying more units in more places. Every instrument, reactor, centrifuge, bioreactor, microfluidic platform, or liquid handling system must perform reliably in a distributed environment where staffing levels, facility conditions, and regulatory expectations may vary. Equipment that worked well in a centralized, expert-led lab may prove difficult to standardize across multiple sites with different operators and service ecosystems.

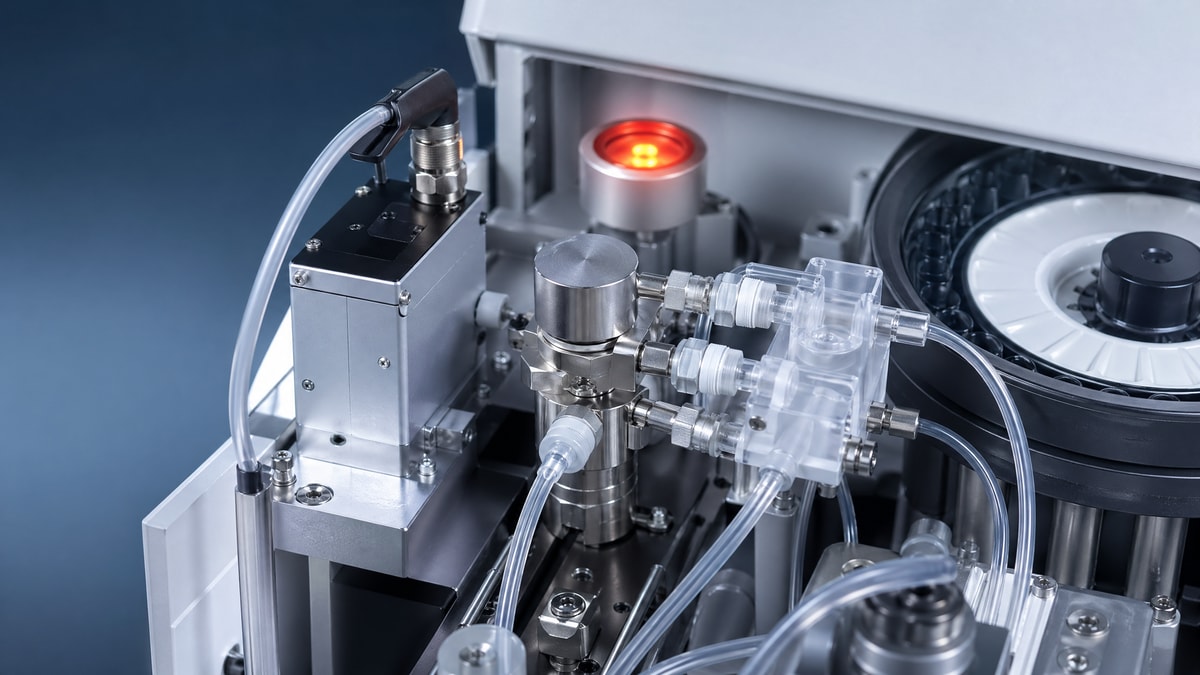

In pharmaceutical and chemical settings, this matters even more because process consistency is inseparable from product quality. A decentralized strategy can accelerate regional innovation, but it can also magnify variability if equipment architecture, calibration logic, software compatibility, and maintenance protocols are not aligned. That is why more organizations are rethinking buying priorities around reproducibility, digital traceability, modularity, and fluidic precision rather than focusing only on throughput or upfront capital cost.

Which companies feel the impact of decentralizing labs on equipment most strongly?

The strongest effects are usually seen in large pharmaceutical groups, specialty chemical manufacturers, CDMOs, biologics developers, and multinational innovation teams that need to move knowledge across regions. These organizations often run parallel workflows: early-stage R&D in one location, pilot-scale synthesis in another, and market-specific quality or application testing in yet another. In this model, equipment becomes a bridge between sites rather than a standalone lab asset.

Lab directors care because decentralized labs increase the burden of method transfer and operational consistency. Procurement leaders care because fragmented equipment portfolios increase lifecycle costs, training complexity, and spare-parts exposure. Bioprocess engineers and technical managers care because subtle differences in fluid handling, shear profile, thermal control, mixing behavior, and sensor integration can alter process outcomes.

The effect is especially visible in applications involving:

- pilot-scale reactor systems that must mirror larger production behavior,

- microfluidic devices requiring highly repeatable channel performance,

- single-use and stainless bioreactor platforms used across development stages,

- centrifugation and separation equipment tied to strict sample integrity standards,

- automated pipetting systems where sub-microliter precision determines data quality.

In all these categories, distributed operations expose weaknesses in equipment that lacks standard operating logic, validated data pathways, or scalable configuration options.

What changes first in procurement priorities when labs become decentralized?

The first shift is from isolated purchasing to system-level purchasing. In a centralized model, a buyer might optimize for the best performance in one location. In a decentralized model, the smarter question is whether the same equipment family can be deployed, supported, and validated across several locations without creating process drift or hidden support costs.

This is where decentralization starts to shape concrete purchasing criteria. Buyers increasingly prioritize five factors:

- Standardization potential: Can one platform support multiple sites with minimal configuration conflict?

- Compliance readiness: Does the equipment align with GMP, ISO, USP, data integrity, and audit expectations?

- Operational simplicity: Can teams with different experience levels use it consistently?

- Service continuity: Are spare parts, calibration, training, and technical support available regionally?

- Scalability: Can the platform support growth from benchtop study to pilot transfer and commercial preparation?

In many cases, organizations also become less tolerant of “hero equipment” that performs well only under expert supervision. Distributed operations favor robust, repeatable, digitally connected systems over highly customized tools that depend on one specialist or one local workaround.

How should enterprise buyers compare equipment options across distributed lab sites?

A useful approach is to compare options not just by technical specification, but by deployment fitness. The effect of decentralization becomes clearer when evaluation teams add site-to-site usability, validation burden, and data continuity to the scoring model. An instrument that appears cost-effective on paper may become expensive if each site needs custom training, local software adaptation, or a different maintenance schedule.

The table below summarizes a practical FAQ-style comparison framework for distributed procurement.

For many enterprise teams, this broader evaluation model leads to different purchasing outcomes. A platform with slightly higher initial cost may win because it lowers total validation effort, improves global comparability, and simplifies regional support.
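The deployment-fitness comparison described above could be sketched as a simple weighted score. This is a minimal illustration, not a recommended scorecard: the criterion names, the weights, the 1-to-5 scale, and the two example platforms are all assumptions made for the example.

```python
from dataclasses import dataclass

# Hypothetical criteria and weights (assumptions for illustration only).
# Every criterion is scored 1-5, where 5 is best; "validation_burden" is
# scored so that a higher number means LESS validation effort.
WEIGHTS = {
    "technical_spec": 0.30,
    "site_usability": 0.20,
    "validation_burden": 0.20,
    "data_continuity": 0.15,
    "regional_service": 0.15,
}


@dataclass
class Candidate:
    name: str
    scores: dict  # criterion name -> score on the 1-5 scale


def deployment_fitness(candidate, weights=WEIGHTS):
    """Return the weighted average score across all criteria."""
    missing = set(weights) - set(candidate.scores)
    if missing:
        raise ValueError(f"{candidate.name} is missing scores: {sorted(missing)}")
    total_weight = sum(weights.values())
    weighted = sum(weights[c] * candidate.scores[c] for c in weights)
    return weighted / total_weight


def rank(candidates):
    """Sort candidates from highest to lowest deployment fitness."""
    return sorted(candidates, key=deployment_fitness, reverse=True)


# Hypothetical platforms: A has the stronger spec sheet, B deploys more easily.
platform_a = Candidate("Platform A", {
    "technical_spec": 5, "site_usability": 2, "validation_burden": 2,
    "data_continuity": 3, "regional_service": 2,
})
platform_b = Candidate("Platform B", {
    "technical_spec": 4, "site_usability": 4, "validation_burden": 4,
    "data_continuity": 4, "regional_service": 4,
})

for c in rank([platform_a, platform_b]):
    print(f"{c.name}: {deployment_fitness(c):.2f}")
```

With these made-up inputs, the platform with the weaker specification ranks first because it scores better on the deployment criteria. Changing the weights changes the outcome, which is why the weighting itself deserves cross-functional agreement.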

What are the most common mistakes companies make when responding to the impact of decentralizing labs on equipment?

One frequent mistake is treating decentralization as a simple capacity expansion project. That approach often results in duplicated equipment purchases without a common operating framework. The business may gain more physical capacity, but lose analytical alignment, data consistency, and maintenance efficiency.

A second mistake is over-prioritizing unit price. In decentralized environments, low purchase cost can be offset by expensive onboarding, inconsistent consumables, fragmented software licensing, or repeated qualification work. Total cost of ownership becomes much more important than price per instrument.
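The trade-off between unit price and total cost of ownership can be made concrete with a rough calculation. The sketch below assumes a deliberately simple cost model (one-time purchase, onboarding, and qualification costs per site, plus flat annual service and consumables costs); the cost categories and every figure are hypothetical.

```python
def total_cost_of_ownership(
    purchase_price,
    onboarding_per_site,
    qualification_per_site,
    annual_service_per_site,
    annual_consumables_per_site,
    sites,
    years,
):
    """Simplified TCO: per-site one-time costs plus flat recurring costs.

    Ignores discounting, downtime, and resale value (assumption).
    """
    one_time = purchase_price + onboarding_per_site + qualification_per_site
    recurring = (annual_service_per_site + annual_consumables_per_site) * years
    return sites * (one_time + recurring)


# Hypothetical figures for two instruments deployed at 4 sites over 5 years.
low_price = total_cost_of_ownership(
    purchase_price=40_000,
    onboarding_per_site=8_000,
    qualification_per_site=12_000,
    annual_service_per_site=6_000,
    annual_consumables_per_site=9_000,
    sites=4,
    years=5,
)
higher_price = total_cost_of_ownership(
    purchase_price=55_000,
    onboarding_per_site=3_000,
    qualification_per_site=5_000,
    annual_service_per_site=4_000,
    annual_consumables_per_site=7_000,
    sites=4,
    years=5,
)

print(f"Lower unit price:  {low_price:,}")     # 540,000
print(f"Higher unit price: {higher_price:,}")  # 472,000
```

In this invented scenario the instrument that costs 15,000 more per unit ends up cheaper across the network, because its onboarding, qualification, and recurring costs are lower at every site.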

A third mistake is underestimating workflow interdependence. For example, fluidic precision in automated liquid handling affects assay reproducibility; centrifuge performance influences sample quality; reactor control fidelity shapes scale-up confidence. If each regional site buys independently, cross-process variability can accumulate in ways that are difficult to trace.

Another common issue is buying equipment that is technically advanced but operationally fragile. Decentralization often favors designs that are intuitive, durable, and easy to qualify. Highly sophisticated platforms still have value, but only when their complexity is matched by training depth, service availability, and clear process need.

How do fluidic precision, compliance, and scalability become more important in decentralized labs?

These three dimensions move to the center because distributed lab networks depend on repeatable execution. Fluidic precision matters when enterprises need comparable results from multiple sites running the same protocols. Even small deviations in dispensing, channel flow, mixing, or separation can undermine method transfer and create false differences between locations.
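One way to check whether sites are producing comparable results is to look at both precision (spread within a site) and bias (offset from the target) for the same protocol. The sketch below assumes replicate dispense volumes are available per site; the site names, the data, the 10.0 µL target, and the 2% acceptance limits are all hypothetical.

```python
from statistics import mean, stdev


def cv_percent(values):
    """Coefficient of variation: sample standard deviation / mean, in percent."""
    return 100 * stdev(values) / mean(values)


def site_report(measurements, target, max_cv=2.0, max_bias=2.0):
    """Summarize precision and bias per site against hypothetical limits."""
    report = {}
    for site, values in measurements.items():
        cv = cv_percent(values)
        bias = 100 * (mean(values) - target) / target
        report[site] = {
            "cv_pct": cv,
            "bias_pct": bias,
            "within_limits": cv <= max_cv and abs(bias) <= max_bias,
        }
    return report


# Hypothetical replicate dispenses (in microliters) of a 10.0 uL target.
measurements = {
    "site_a": [10.0, 10.1, 9.9, 10.0, 10.0],
    "site_b": [10.4, 10.5, 10.3, 10.4, 10.4],
}

report = site_report(measurements, target=10.0)
for site, stats in report.items():
    print(site, {k: round(v, 2) if isinstance(v, float) else v
                 for k, v in stats.items()})
```

In this example both sites are equally precise, but the second site dispenses consistently high. A check on spread alone would miss that offset, and the resulting difference between locations could be mistaken for a real process effect.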

Compliance readiness becomes more critical because decentralized operations create more inspection points, more data paths, and more handoffs between teams. Equipment that includes traceable documentation, controlled software behavior, and strong validation support helps enterprises maintain confidence as workflows move from research to pilot or pre-commercial stages.

Scalability matters because decentralized labs are rarely static. A site may begin with application testing, then add formulation work, stability studies, localized pilot runs, or rapid-response development programs. Equipment that can evolve through modular upgrades or adjacent platform compatibility provides better long-term resilience than systems optimized for only one narrow use case.

This is particularly relevant in environments where bench-scale insight must connect smoothly to larger process decisions. Organizations that benchmark equipment against recognized industrial and regulatory standards are better positioned to avoid performance gaps between local experimentation and broader manufacturing intent.

How can decision-makers build a smarter buying framework for decentralized lab infrastructure?

The most effective framework starts with business design, not catalog comparison. Leaders should first define why the lab network is being decentralized: speed to regional market, supply resilience, customer proximity, localized development, or risk diversification. Once that purpose is clear, equipment selection can be matched to workflow criticality rather than handled as a generic procurement exercise.

A strong buying framework usually includes the following steps:

- Map which processes must remain identical across all sites and which can be locally adapted.

- Identify critical equipment where performance variation would affect quality, compliance, or scale-up confidence.

- Create a cross-functional scorecard involving procurement, operations, quality, engineering, and end users.

- Require vendors to demonstrate service coverage, training support, spare-parts readiness, and documentation maturity.

- Pilot standardization on a smaller equipment set before scaling purchases globally.
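The first two steps above amount to building a process map and deriving a shortlist of equipment to standardize first. A minimal sketch of that idea follows; the data structure, field names, and example processes are assumptions for illustration rather than a prescribed template.

```python
from dataclasses import dataclass


@dataclass
class Process:
    name: str
    must_be_identical: bool  # step 1: identical across sites or locally adaptable
    quality_critical: bool   # step 2: variation would affect quality or scale-up
    equipment: list          # equipment the process depends on


def standardization_shortlist(processes):
    """Equipment used by processes that must be identical AND are critical."""
    shortlist = set()
    for p in processes:
        if p.must_be_identical and p.quality_critical:
            shortlist.update(p.equipment)
    return sorted(shortlist)


# Hypothetical process map for a small lab network.
processes = [
    Process("assay plate preparation", True, True,
            ["automated liquid handler"]),
    Process("pilot-scale synthesis", True, True,
            ["pilot reactor", "centrifuge"]),
    Process("market-specific application testing", False, False,
            ["benchtop viscometer"]),
]

print(standardization_shortlist(processes))
# ['automated liquid handler', 'centrifuge', 'pilot reactor']
```

Equipment that appears only in locally adaptable processes stays off the shortlist, which keeps the initial standardization pilot small.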

Seen this way, equipment selection for decentralized labs is not only a procurement challenge. It is a governance issue that affects scientific repeatability, regional execution speed, and enterprise resilience.

What should enterprise leaders ask before moving forward with suppliers or internal purchasing teams?

Before issuing a final purchase decision, leaders should ask a focused set of questions that reflect the realities of decentralized operations. Can the equipment deliver consistent outputs across sites with different operator profiles? Are calibration, software, and consumables controlled in a way that supports global comparability? Is the platform suitable for both current experiments and future pilot or regulated workflows? Can the vendor support preventive maintenance and urgent service in every required geography?

It is also wise to confirm what success will look like 12 to 24 months after deployment. Will the equipment reduce transfer friction between R&D and pilot production? Will it strengthen compliance posture? Will it shorten response time for regional product adaptation or customer-specific development? These questions help move the conversation beyond specification sheets and toward operational value.

For organizations decentralizing their labs, the best results usually come from aligning technical benchmarks, regulatory expectations, and lifecycle economics from the beginning. Before comparing final options for a specific solution, parameter range, deployment roadmap, service model, or budget, the most productive next step is to discuss site count, workflow criticality, precision requirements, compliance targets, validation burden, and expected scale-up path.

Expert Insights

Chief Security Architect

Dr. Thorne specializes in the intersection of structural engineering and digital resilience. He has advised three G7 governments on industrial infrastructure security.
