Why Personalized Medicine Needs Different Lab Gear Choices

Personalized medicine is reshaping how laboratories select equipment, plan for scale, and control precision across their workflows. As the requirements for personalized medicine lab gear come into sharper focus, decision-makers must evaluate systems that support small-batch flexibility, consistent regulatory documentation, and a smooth transition from R&D to production. This shift makes lab gear a strategic factor in both innovation speed and therapeutic reliability.

What the shift to personalized medicine really means for laboratories

Personalized medicine is not simply a new treatment philosophy. It changes how samples are processed, how therapies are formulated, how quality is documented, and how production equipment is selected. In conventional large-batch environments, laboratories often optimize around repeatability at scale for a stable product profile. In personalized therapeutics, however, variability is built into the workflow: smaller patient cohorts, shorter production windows, biomarker-driven decisions, and frequent transitions between development stages.

That is why the future of personalized medicine lab gear is closely tied to flexibility, fluidic precision, contamination control, and data traceability. Lab directors and process engineers must think beyond whether a unit can perform a task. They must assess whether the equipment can support patient-specific or indication-specific workflows without compromising regulatory readiness or process consistency.

For information researchers in pharmaceutical, biotech, and advanced chemical environments, this topic matters because lab gear now influences strategic outcomes. The right system can reduce transfer risk between benchtop proof-of-concept and pilot-scale execution. The wrong system can create hidden bottlenecks in dosing accuracy, sample integrity, cleaning validation, or throughput planning.

Why the industry is paying closer attention now

Several industry trends are sharpening the focus on personalized medicine lab gear. First, cell and gene therapies, mRNA platforms, targeted biologics, and companion diagnostics all demand more precise material handling than many legacy systems were designed to provide. Second, the move from batch-heavy processes toward more continuous, modular, and digitally monitored operations is pushing labs to adopt instrumentation that maintains performance across changing volumes and formulations.

Third, procurement teams are no longer evaluating equipment only on upfront specifications. They also look at GMP alignment, ISO-referenced performance, ease of validation, single-use compatibility, software integration, and long-term scalability. In other words, equipment is now part of the process architecture, not just a support tool.

This is where a technical benchmarking perspective becomes essential. Organizations such as G-LSP address the gap between laboratory experimentation and industrial execution by comparing hardware through the lens of fluidic control, bio-consistency, and transition readiness. In personalized medicine settings, those factors often determine whether a process remains robust as it moves from discovery into regulated production support.

Core equipment traits shaping the future of personalized medicine lab gear

To understand why different gear choices are necessary, it helps to focus on the performance traits that matter most. Personalized medicine workflows typically require equipment that can operate accurately at smaller volumes, switch conditions rapidly, and preserve product integrity under sensitive biological or chemical constraints.

- High fluidic precision for sub-microliter to low-volume dosing

- Scalable architecture that supports smooth transfer from R&D to pilot production

- Closed or low-exposure handling to reduce contamination risk

- Strong data capture for traceability, audit support, and process reproducibility

- Material compatibility for biologics, viral vectors, sensitive reagents, and advanced solvents

- Fast changeover capability for multi-product or patient-specific production environments

These requirements explain why the future of personalized medicine lab gear is not centered on one single machine category. It depends on a coordinated ecosystem of reactors, microfluidics, bioreactors, centrifuges, and liquid handling platforms that work together with less process drift and better validation logic.

Industry overview: where equipment decisions carry the most weight

In practice, the impact of equipment choice varies by workflow. The table below outlines where the future of personalized medicine lab gear becomes especially important and what decision-makers typically need to evaluate.

How specific equipment categories are evolving

Pilot-scale reactors and synthesis systems

For precision therapeutics and specialized compounds, pilot-scale reactors must do more than increase volume. They need to preserve reaction fidelity, thermal control, and mixing behavior from early-stage development onward. Glass-lined and advanced stirred-tank systems remain valuable, but they are increasingly judged on modularity, sensor integration, and suitability for smaller, more variable campaigns.

Precision microfluidic devices

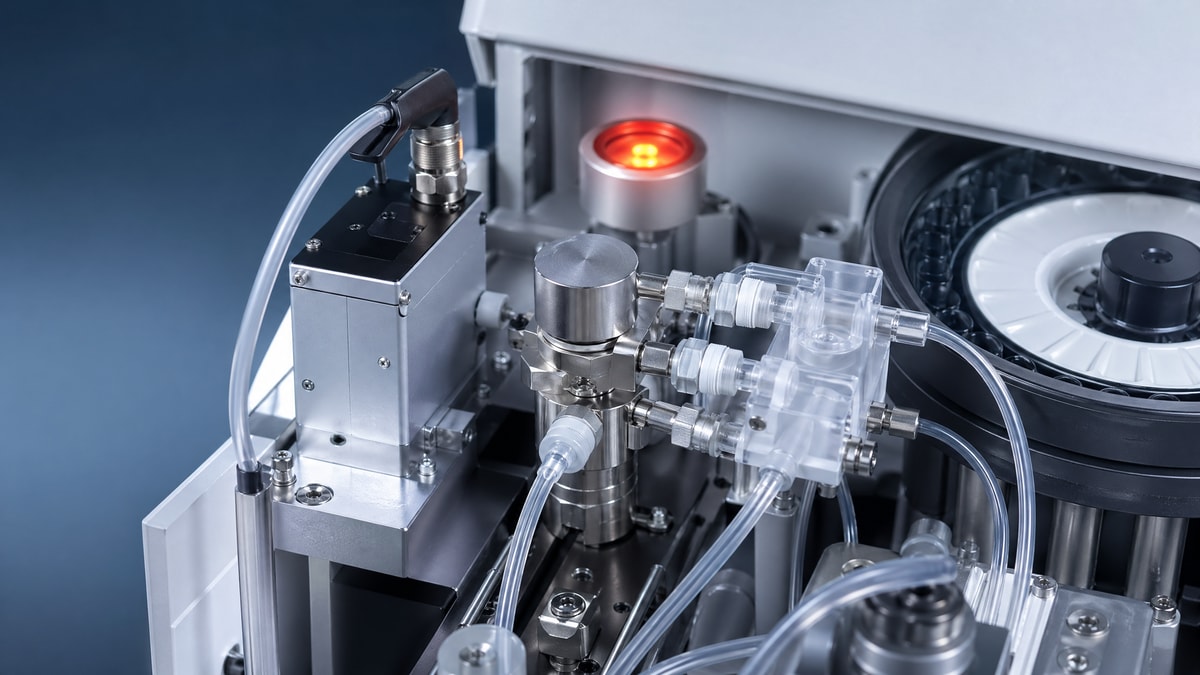

Microfluidics plays a major role in the future of personalized medicine lab gear because it supports exact reagent manipulation, controlled particle formation, and efficient screening with minimal input materials. This is especially relevant in nanoparticle formulation, biomarker assays, and small-batch therapeutic prototyping, where tiny deviations can alter downstream performance.

Bioreactors and cell culture infrastructure

Personalized biologics and autologous therapies place strong demands on culture control. Single-use bioreactors, low-shear mixing systems, and tightly monitored environmental controls help labs manage batch individuality while reducing cleaning validation burden. Equipment in this area must balance flexibility with biological consistency.

Centrifugation and separation technology

Separation steps often determine yield, purity, and sample viability. In personalized medicine, centrifuges and related separation tools must support fine-tuned protocols rather than broad standard settings. Multi-sensor monitoring, controlled acceleration profiles, and reproducible rotor performance all contribute to better sample integrity.

Automated pipetting and liquid handling

Automated liquid handling is one of the clearest examples of why different gear choices matter. Manual flexibility may help in early research, but personalized workflows quickly expose risks in accuracy, repeatability, and documentation. Systems capable of sub-microliter precision, smart calibration, and digital traceability are increasingly central to the future of personalized medicine lab gear.
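One way to make "sub-microliter precision" concrete during an evaluation is a gravimetric check. Water is dispensed repeatedly at the real working volume, each replicate is weighed, and each mass is converted to a volume. The check then reports systematic error (accuracy) and the coefficient of variation (precision). The sketch below assumes that approach. The function names, the default conversion factor of about 1.0029 µL/mg (roughly water near 20 °C), the replicate data, and the acceptance limits are illustrative assumptions, not vendor or standard specifications.

```python
from statistics import mean, stdev


def pipetting_performance(masses_mg, nominal_ul, z_factor=1.0029):
    """Summarize a gravimetric pipetting check at one nominal volume.

    masses_mg: replicate masses of dispensed water, in milligrams.
    nominal_ul: target volume per dispense, in microliters.
    z_factor: mass-to-volume conversion in uL/mg (assumed ~1.0029 for
        water near 20 degC; adjust for actual temperature and pressure).
    """
    if len(masses_mg) < 2:
        raise ValueError("at least two replicates are needed to estimate precision")
    volumes = [m * z_factor for m in masses_mg]  # convert each mass to a volume
    mean_ul = mean(volumes)
    return {
        "mean_ul": mean_ul,
        # Systematic error: bias of the mean relative to the target volume.
        "systematic_error_pct": 100.0 * (mean_ul - nominal_ul) / nominal_ul,
        # Random error: sample standard deviation relative to the mean (CV).
        "cv_pct": 100.0 * stdev(volumes) / mean_ul,
    }


def meets_limits(result, max_abs_systematic_pct, max_cv_pct):
    """Hypothetical acceptance rule: both bias and CV must be within limits."""
    return (abs(result["systematic_error_pct"]) <= max_abs_systematic_pct
            and result["cv_pct"] <= max_cv_pct)


if __name__ == "__main__":
    # Hypothetical replicate masses for a 0.5 uL dispense (illustrative data only).
    masses = [0.497, 0.502, 0.495, 0.500, 0.504, 0.498, 0.501, 0.496, 0.503, 0.499]
    result = pipetting_performance(masses, nominal_ul=0.5)
    print(result)
    print("within limits:", meets_limits(result, max_abs_systematic_pct=2.0, max_cv_pct=1.5))
```

Running the same check on each candidate system, at the volumes the workflow actually uses, gives procurement and engineering teams a shared, numeric basis for comparing precision claims.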

Business value beyond the laboratory bench

The value of better gear selection is not limited to scientific performance. It also affects cost control, compliance, development speed, and organizational resilience. A lab equipped for personalized medicine can use expensive materials more efficiently, shorten iteration cycles, and reduce costly deviations during technology transfer. That is particularly important for global enterprises managing multiple sites, contract partners, and regulatory jurisdictions.

For procurement officers, this means evaluating total operational fit rather than isolated instrument specs. For bioprocess engineers, it means choosing systems that generate stable process knowledge. For lab directors, it means building an equipment environment that can absorb future therapeutic complexity without constant reinvestment in incompatible platforms.

Common use scenarios that require different lab gear choices

Practical evaluation points for decision-makers

When assessing the future of personalized medicine lab gear, companies should avoid focusing only on brand reputation or raw throughput. A more useful framework asks five questions:

- Can the system maintain precision at the actual working volume?

- Can it support regulatory documentation without excessive customization?

- Is it compatible with single-use or closed-process strategies?

- Can it scale or translate without changing core process behavior?

- Does it fit into a broader digital and operational architecture?

It is also wise to benchmark equipment against international expectations such as ISO, USP, and GMP-relevant standards where applicable. This does not just reduce compliance risk. It helps teams compare platforms using common performance language, which is essential when multiple departments influence final selection.

Another practical consideration is cross-functional alignment. Personalized medicine programs often involve R&D, manufacturing science, quality, and procurement from the beginning. Gear decisions made early and in isolation can lock in hidden inefficiencies later. Shared technical criteria create better long-term outcomes.

FAQ on the future of personalized medicine lab gear

Is personalized medicine always a low-volume equipment problem?

Not always. Low-volume precision is important, but the larger issue is controlled variability. Some workflows may expand in scale, yet they still require flexible, traceable, and formulation-sensitive equipment.

Why can’t conventional batch lab gear simply be reused?

In many cases it can be reused for early work, but conventional systems may struggle with changeover speed, data granularity, contamination control, or micro-volume accuracy. Personalized workflows expose those limitations more quickly.

Which equipment area should organizations review first?

Start with the step where product integrity or process translation is most vulnerable. For some labs that is liquid handling; for others it is bioreactor control, microfluidic formulation, or separation technology.

A practical path forward

The future of personalized medicine lab gear is ultimately about building laboratory infrastructure that matches the precision, responsiveness, and accountability of next-generation therapeutics. As personalized treatment models mature, equipment selection will increasingly define how quickly organizations can move from hypothesis to validated outcome.

For teams evaluating their next steps, the most effective approach is to map therapeutic goals against actual workflow demands, then benchmark hardware according to fluidic precision, bio-consistency, scalability, and regulatory fit. In that context, multidisciplinary intelligence platforms such as G-LSP offer meaningful value by connecting benchtop realities with industrial execution standards. That connection is what turns lab gear from an operational purchase into a strategic capability.
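The benchmarking step described above can be sketched as a simple weighted scoring matrix. The four criteria come from the text. The 1 to 5 rating scale, the weights, the optional minimum-score floors, and the candidate names are all hypothetical. A real evaluation would define its rubric jointly with R&D, manufacturing science, quality, and procurement.

```python
from dataclasses import dataclass, field

# The four benchmarking criteria named in the text.
CRITERIA = ("fluidic_precision", "bio_consistency", "scalability", "regulatory_fit")


@dataclass
class Candidate:
    name: str
    # Hypothetical rubric: each criterion rated 1 (poor) to 5 (excellent).
    scores: dict = field(default_factory=dict)


def weighted_score(candidate, weights):
    """Weighted mean of the criterion ratings (weights need not sum to 1)."""
    missing = [c for c in CRITERIA if c not in candidate.scores]
    if missing:
        raise ValueError(f"{candidate.name} is missing ratings for {missing}")
    total_weight = sum(weights[c] for c in CRITERIA)
    return sum(weights[c] * candidate.scores[c] for c in CRITERIA) / total_weight


def rank(candidates, weights, floors=None):
    """Drop candidates below any floor, then sort the rest by weighted score."""
    floors = floors or {}
    eligible = [
        cand for cand in candidates
        if all(cand.scores.get(c, 0) >= floor for c, floor in floors.items())
    ]
    return sorted(eligible, key=lambda cand: weighted_score(cand, weights), reverse=True)


if __name__ == "__main__":
    # Hypothetical weights emphasizing fluidic precision.
    weights = {"fluidic_precision": 3, "bio_consistency": 1, "scalability": 1, "regulatory_fit": 1}
    candidates = [
        Candidate("Platform A", {"fluidic_precision": 4, "bio_consistency": 4,
                                 "scalability": 4, "regulatory_fit": 4}),
        Candidate("Platform B", {"fluidic_precision": 5, "bio_consistency": 3,
                                 "scalability": 2, "regulatory_fit": 5}),
        Candidate("Platform C", {"fluidic_precision": 5, "bio_consistency": 5,
                                 "scalability": 5, "regulatory_fit": 2}),
    ]
    # Treat regulatory fit as a gate: anything rated below 3 is excluded.
    for cand in rank(candidates, weights, floors={"regulatory_fit": 3}):
        print(f"{cand.name}: {weighted_score(cand, weights):.2f}")
```

The floors separate non-negotiable requirements from trade-offs. In this example, a platform with excellent precision but weak regulatory fit is excluded outright rather than averaged upward by its other ratings.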

Expert Insights

Chief Security Architect

Dr. Thorne specializes in the intersection of structural engineering and digital resilience. He has advised three G7 governments on industrial infrastructure security.
