API Interoperability Metrics That Prevent Integration Rework

For technical evaluators, failed integrations rarely stem from missing features; they stem from weak interoperability signals discovered too late. This article examines software API interoperability metrics that help assess compatibility, data consistency, protocol alignment, and long-term maintainability before deployment, reducing integration rework and supporting faster, lower-risk decisions across complex lab, bioprocess, and industrial technology environments.

Why scenario-specific evaluation matters more than generic API claims

In technical procurement and system selection, “API available” is often treated as a yes-or-no checkbox. That shortcut is risky. A software endpoint that works well in a standalone dashboard may fail under regulated batch traceability, real-time instrument orchestration, or cross-site data synchronization. For evaluators in pharmaceutical, chemical, and precision lab environments, software API interoperability metrics are not abstract IT measures; they are practical signals of whether an integration will remain stable as workflows become larger, faster, and more tightly validated.

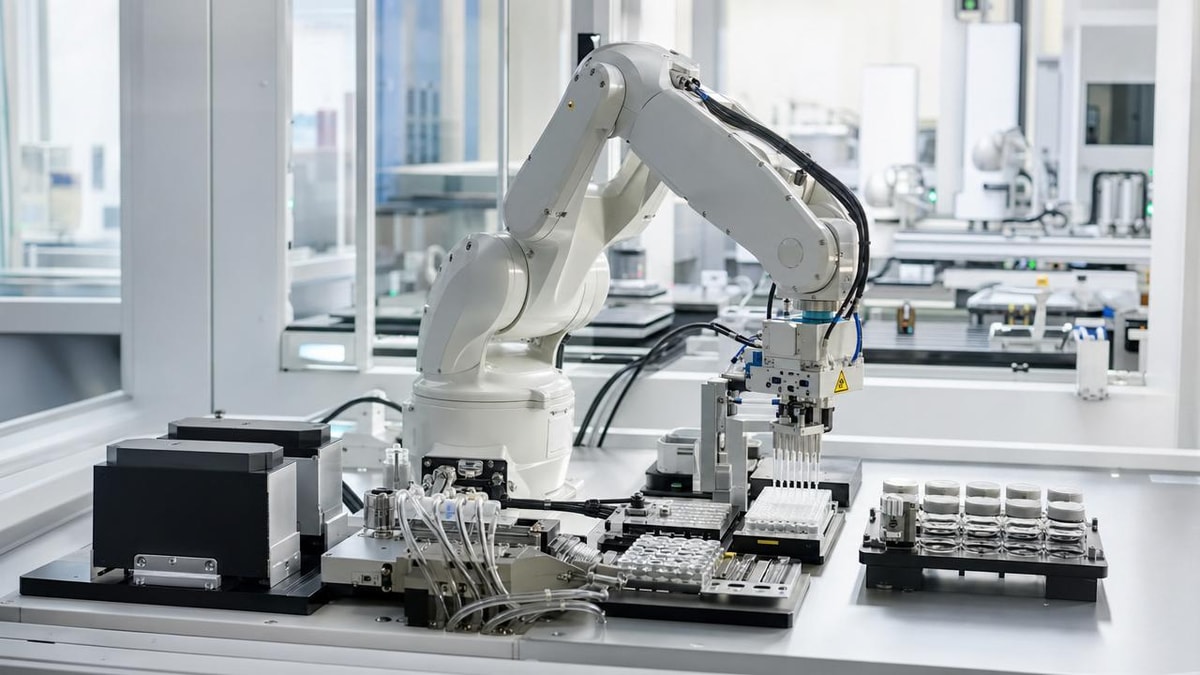

This matters especially in environments like G-LSP’s focus areas: pilot-scale reactors, microfluidic systems, bioreactors, centrifugation platforms, and automated liquid handling. These settings combine device control, process analytics, QA documentation, and ERP or LIMS connectivity. Each use case stresses interoperability in a different way. A procurement officer may care about vendor lock-in risk, a bioprocess engineer may focus on time-series integrity, and a lab director may prioritize deployment speed across mixed hardware generations. The right metrics depend on the scenario.

Where software API interoperability metrics are most critical

Technical evaluators usually encounter interoperability risk in five recurring business scenarios. Identifying which scenario applies is the first step, because it determines how the metrics should be weighted and interpreted.

1. Instrument-to-software integration in advanced labs

Here the main concern is whether analytical devices, dispensers, reactors, or cell culture systems can reliably transmit commands, status, alarms, and result files into supervisory software. The most useful software API interoperability metrics in this scenario include command acknowledgment reliability, event latency, schema stability, and backward compatibility across firmware updates.
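As a rough illustration, acknowledgment reliability and event latency can be computed from a command log captured during a vendor trial. The sketch below assumes a hypothetical log shape (`CommandRecord` with millisecond send and acknowledgment times) and a nearest-rank percentile; neither is prescribed by any particular instrument API.

```python
import math
from dataclasses import dataclass
from typing import Optional


@dataclass
class CommandRecord:
    """One command sent to an instrument (hypothetical log shape)."""
    sent_ms: int               # send time in milliseconds
    acked_ms: Optional[int]    # acknowledgment time, or None if never acknowledged


def ack_reliability(records: list[CommandRecord], timeout_ms: int) -> float:
    """Fraction of commands acknowledged within timeout_ms."""
    if not records:
        raise ValueError("no command records to evaluate")
    on_time = sum(
        1 for r in records
        if r.acked_ms is not None and r.acked_ms - r.sent_ms <= timeout_ms
    )
    return on_time / len(records)


def latency_percentile(records: list[CommandRecord], pct: float) -> int:
    """Nearest-rank percentile of acknowledgment latency, in milliseconds."""
    latencies = sorted(
        r.acked_ms - r.sent_ms for r in records if r.acked_ms is not None
    )
    if not latencies:
        raise ValueError("no acknowledged commands")
    rank = max(1, math.ceil(pct / 100 * len(latencies)))
    return latencies[rank - 1]


# Illustrative data only, not measurements from a real device.
log = [
    CommandRecord(0, 100),
    CommandRecord(1000, 1500),
    CommandRecord(2000, None),   # acknowledgment never arrived
    CommandRecord(3000, 3200),
]
print(ack_reliability(log, timeout_ms=300))
print(latency_percentile(log, 95))
```

Running the same calculation before and after a firmware update gives a simple way to compare backward compatibility in practice.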

2. LIMS, MES, and ERP data handoff

This scenario is less about device control and more about data consistency. Evaluators should look at field mapping completeness, API version governance, error handling transparency, and transaction traceability. If an API cannot preserve batch identifiers, material genealogy, or audit timestamps, rework appears later as manual correction and compliance exposure.
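One way to make field mapping completeness concrete is to push a known record through the handoff and check which traceability fields survive unchanged. The field names below (`batch_id`, `material_lot`, `audit_timestamp`) are hypothetical placeholders, not the schema of any specific LIMS, MES, or ERP product.

```python
# Hypothetical traceability fields an evaluator wants preserved end to end.
REQUIRED_FIELDS = ("batch_id", "material_lot", "audit_timestamp")


def mapping_completeness(source: dict, target: dict, required=REQUIRED_FIELDS):
    """Return (fraction of required fields preserved, list of fields lost or altered).

    A field counts as preserved only if it exists in the source record and
    arrives in the target record with an identical value.
    """
    lost = [
        name for name in required
        if name not in source or target.get(name) != source[name]
    ]
    preserved = len(required) - len(lost)
    return preserved / len(required), lost


source_record = {
    "batch_id": "B-001",
    "material_lot": "L-77",
    "audit_timestamp": "2024-01-01T00:00:00+00:00",
}
# Simulated record as it arrives downstream: the audit timestamp was dropped.
target_record = {"batch_id": "B-001", "material_lot": "L-77"}

ratio, lost_fields = mapping_completeness(source_record, target_record)
print(ratio, lost_fields)
```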

3. Multi-vendor automation projects

When robotics, fluidic controllers, analytics platforms, and cloud tools come from different suppliers, interoperability is strained by uneven documentation quality and conflicting payload assumptions. In this case, software API interoperability metrics should emphasize protocol conformity, documentation completeness, test environment quality, and the percentage of functions accessible without custom middleware.

4. Scale-up from benchtop to pilot or production support systems

Scale-up often reveals hidden interoperability weaknesses. APIs that work at low sample frequency may break when connected to higher-volume sensors, more users, and stricter review cycles. Throughput tolerance, queue resilience, timestamp precision, and retry logic become the leading indicators.
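When probing retry logic in a test harness, or reviewing what a vendor SDK does, a bounded retry with exponential backoff is a common baseline. The sketch below is a generic pattern under stated assumptions: `call_with_backoff` and `flaky_call` are hypothetical names, and only `ConnectionError` is treated as transient.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def call_with_backoff(
    func: Callable[[], T],
    max_attempts: int = 4,
    base_delay_s: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call func, retrying on ConnectionError with delays of base, 2x base, 4x base..."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        try:
            return func()
        except ConnectionError:
            if attempt == max_attempts - 1:
                raise  # retries exhausted: surface the failure to the caller
            sleep(base_delay_s * 2 ** attempt)
    raise AssertionError("unreachable")


# Simulated endpoint that fails twice, then succeeds (illustrative only).
calls = {"count": 0}


def flaky_call() -> str:
    calls["count"] += 1
    if calls["count"] <= 2:
        raise ConnectionError("simulated transient failure")
    return "ok"


delays: list[float] = []
result = call_with_backoff(flaky_call, sleep=delays.append)  # record delays, do not wait
print(result, delays)
```

Injecting the `sleep` function keeps the check fast and lets the harness record the delay schedule instead of waiting on it.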

5. Global deployment and long lifecycle programs

For enterprise programs across regions or business units, the issue is maintainability over time. Evaluators should measure deprecation notice periods, release predictability, localization support, identity federation compatibility, and long-term version coexistence. These software API interoperability metrics directly affect whether a platform can remain usable across years of operational change.
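Deprecation notice periods can be measured directly from a vendor's release notes. The following sketch assumes a hand-collected history of announcement and removal dates; the entries are invented for illustration and do not describe any real product.

```python
from datetime import date

# Hypothetical deprecation history: (feature, date announced, date removed).
history = [
    ("v1 results endpoint", date(2022, 1, 10), date(2023, 1, 10)),
    ("legacy auth token", date(2023, 3, 1), date(2023, 4, 15)),
]


def notice_days(announced: date, removed: date) -> int:
    """Days of warning integrators had before a feature was removed."""
    if removed < announced:
        raise ValueError("removal date precedes announcement")
    return (removed - announced).days


def shortest_notice(entries) -> tuple[int, str]:
    """Return (days, feature) for the deprecation with the least warning."""
    return min((notice_days(a, r), name) for name, a, r in entries)


print(shortest_notice(history))
```

The shortest notice period is often more informative than the average, since a single abrupt removal is enough to force unplanned revalidation.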

A practical comparison table for common evaluation scenarios

The table below helps technical evaluators align interoperability priorities with real operating contexts rather than relying on generic vendor demonstrations.

The core software API interoperability metrics to prioritize

Although every project is different, several metrics consistently separate low-risk integrations from expensive ones.

Protocol and standards alignment

Check whether the API uses recognized patterns and whether authentication, serialization, and error models align with enterprise norms. In regulated and technical environments, support for REST conventions, webhooks, OPC UA bridges, or standardized event models may be more important than raw feature count. A vendor that deviates heavily from expected practices increases onboarding effort and long-term maintenance burden.

Schema clarity and data fidelity

Data should retain meaning across systems. Evaluate field naming consistency, unit handling, timestamp resolution, null-value behavior, metadata preservation, and identifier persistence. In fluidic-precision and process environments, losing decimal precision or context tags can damage analytical comparability even when the API technically “works.”
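A simple fidelity check sends a known record through the API and compares what comes back. This is a minimal sketch, assuming numeric values travel as strings so that digits can be compared exactly, and using hypothetical field names (`value`, `unit`, `measured_at`, `sample_id`).

```python
from datetime import datetime


def fidelity_issues(sent: dict, received: dict, timestamp_fields=("measured_at",)):
    """List the ways a record changed between what was sent and what was read back."""
    issues = []
    for key, original in sent.items():
        if key not in received:
            issues.append(f"{key}: missing in response")
        elif received[key] != original:
            issues.append(f"{key}: {original!r} became {received[key]!r}")
    for key in timestamp_fields:
        if key in received:
            # A timestamp without a UTC offset is ambiguous across sites.
            if datetime.fromisoformat(received[key]).tzinfo is None:
                issues.append(f"{key}: no UTC offset")
    return issues


sent = {
    "sample_id": "S-42",
    "value": "0.1250",           # trailing zero carries the reported precision
    "unit": "mL",
    "measured_at": "2024-05-01T12:00:00.123456+00:00",
}
# Simulated response: precision trimmed, sub-second detail and offset dropped.
received = {
    "sample_id": "S-42",
    "value": "0.125",
    "unit": "mL",
    "measured_at": "2024-05-01T12:00:00",
}

for issue in fidelity_issues(sent, received):
    print(issue)
```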

Version stability and change governance

One of the most valuable software API interoperability metrics is the vendor’s change discipline. Ask how often breaking changes occur, whether previous versions remain supported, and how migration guidance is delivered. Stable version governance reduces hidden revalidation and redevelopment costs.
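Breaking changes can be counted by comparing the field definitions of two API versions. The sketch below assumes each version is summarized as a flat mapping of field name to type, and it treats removed fields and type changes as breaking while treating added fields as non-breaking; real schemas are usually nested and may need a stricter rule.

```python
def breaking_changes(old: dict[str, str], new: dict[str, str]) -> list[str]:
    """Fields that existing integrations rely on but that changed or disappeared."""
    changes = []
    for field, old_type in old.items():
        if field not in new:
            changes.append(f"removed: {field}")
        elif new[field] != old_type:
            changes.append(f"type changed: {field} ({old_type} -> {new[field]})")
    return changes


# Hypothetical field summaries for two versions of the same resource.
v1 = {"batch_id": "string", "volume_ul": "number", "operator": "string"}
v2 = {"batch_id": "string", "volume_ul": "string", "run_id": "string"}

print(breaking_changes(v1, v2))
```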

Observability and failure transparency

A highly interoperable API should make failures understandable. Strong signals include structured error codes, correlation IDs, webhook delivery logs, rate-limit visibility, and retry recommendations. Without this, integrators spend too much time guessing whether failures come from payload design, permissions, network timing, or source-system behavior.
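During a trial, error responses can be screened for the fields that make failures diagnosable. The expected field names here (`code`, `message`, `correlation_id`) are assumptions for illustration; a given vendor may use different names or a different envelope.

```python
import json

# Hypothetical fields an evaluator expects in every error response.
EXPECTED_ERROR_FIELDS = ("code", "message", "correlation_id")


def missing_error_fields(body_text: str) -> list[str]:
    """Return the expected error fields that are absent or empty in a response body."""
    try:
        body = json.loads(body_text)
    except json.JSONDecodeError:
        return list(EXPECTED_ERROR_FIELDS)  # not structured at all
    if not isinstance(body, dict):
        return list(EXPECTED_ERROR_FIELDS)
    return [name for name in EXPECTED_ERROR_FIELDS if not body.get(name)]


structured = '{"code": "RATE_LIMITED", "message": "Too many requests", "correlation_id": "abc-123"}'
partial = '{"message": "bad request"}'
opaque = "<html>502 Bad Gateway</html>"

for sample in (structured, partial, opaque):
    print(missing_error_fields(sample))
```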

Testability before deployment

Sandbox realism is often underestimated. Evaluators should examine whether the test environment mirrors production data shapes, supports edge cases, and allows performance simulation. Weak testability is a strong predictor of integration rework because teams validate against ideal conditions, not operational reality.

How priorities change by evaluator role

Different stakeholders use software API interoperability metrics for different decisions, so alignment is essential before scoring vendors.

Common scenario-based mistakes that trigger rework

The most expensive mistakes usually come from evaluating the wrong layer of interoperability.

- Assuming device data export equals full API interoperability. Export files may not support event-driven workflows or two-way control.

- Testing only normal conditions. Real environments include malformed payloads, delayed acknowledgments, partial records, and credential changes.

- Ignoring lifecycle metrics. An API that integrates quickly today may create major rework if version changes are poorly governed.

- Overvaluing feature breadth while neglecting documentation quality and support responsiveness.

- Not verifying unit semantics, timestamp time zones, and sample identifiers in regulated or high-precision workflows.

Scenario-fit recommendations for complex lab and industrial environments

In multidisciplinary environments such as pilot synthesis, microfluidic experimentation, cell culture infrastructure, centrifugation analytics, and automated liquid handling, interoperability should be evaluated as a workflow property, not a software property alone. A useful approach is to map every integration to three layers: command exchange, data integrity, and governance continuity.

For command-intensive systems, prioritize latency, acknowledgment reliability, and recovery behavior after interruption. For data-centric systems, focus on schema fidelity, traceability, and metadata preservation. For enterprise orchestration, emphasize version policy, identity compatibility, audit support, and vendor responsiveness. This scenario-based weighting gives technical evaluators a more realistic view than a single composite score.

When benchmarking suppliers, request evidence rather than promises: sample payloads, version history, error code libraries, sandbox access, release notes, and references from similarly complex deployments. These artifacts reveal whether software API interoperability metrics are operationally proven or exist only in marketing language.

FAQ: judging software API interoperability metrics before purchase

Which metric is the strongest predictor of future integration rework?

Usually it is not one metric alone, but the combination of schema stability, error transparency, and version governance. If these are weak, even feature-rich APIs become expensive to maintain.

Are software API interoperability metrics equally important for small deployments?

Yes, but the weighting changes. Smaller deployments may tolerate lower throughput, yet they still need clean mapping, understandable errors, and predictable updates to avoid hidden operational burden.

How can evaluators compare vendors fairly?

Use a scenario-based scorecard. Define the workflow, list the critical failure modes, assign weights to the most relevant software API interoperability metrics, and request proof in a controlled test case.
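The scorecard described above can be sketched as a weighted average. The metric names, weights, and vendor scores below are illustrative assumptions, not recommended values; each team would set its own from the workflow and failure modes it defined.

```python
def weighted_score(weights: dict[str, float], scores: dict[str, float]) -> float:
    """Weighted average of metric scores; every weighted metric must be scored."""
    missing = sorted(set(weights) - set(scores))
    if missing:
        raise ValueError(f"no score for: {', '.join(missing)}")
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(weights[m] * scores[m] for m in weights) / total_weight


# Hypothetical weights for a regulated data-exchange scenario.
weights = {"schema_stability": 4, "error_transparency": 3, "version_governance": 3}

# Hypothetical scores on a 1-5 scale from a controlled test case.
vendor_a = {"schema_stability": 4, "error_transparency": 2, "version_governance": 5}
vendor_b = {"schema_stability": 3, "error_transparency": 5, "version_governance": 3}

print(weighted_score(weights, vendor_a))
print(weighted_score(weights, vendor_b))
```

Raising an error when a metric has no score keeps an untested vendor from looking better simply because evidence was never collected.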

Final decision guidance

The best interoperability decision is rarely about choosing the API with the longest feature list. It is about selecting the option whose software API interoperability metrics fit the real operating scenario: precision instrumentation, regulated data exchange, multi-vendor automation, scale-up stress, or global lifecycle management. For technical evaluators, that means asking not only “Can it connect?” but also “Can it connect accurately, transparently, and sustainably under our conditions?”

If your organization is reviewing platforms across sensitive R&D-to-production transitions, start with a scenario map, define the interoperability risks that matter most, and require evidence-based validation before deployment. That approach reduces integration rework, shortens evaluation cycles, and supports better decisions across complex lab, bioprocess, and industrial technology ecosystems.

Expert Insights

Chief Security Architect

Dr. Thorne specializes in the intersection of structural engineering and digital resilience. He has advised three G7 governments on industrial infrastructure security.
