Payload vs Reach: Where Lab Robot Specs Mislead

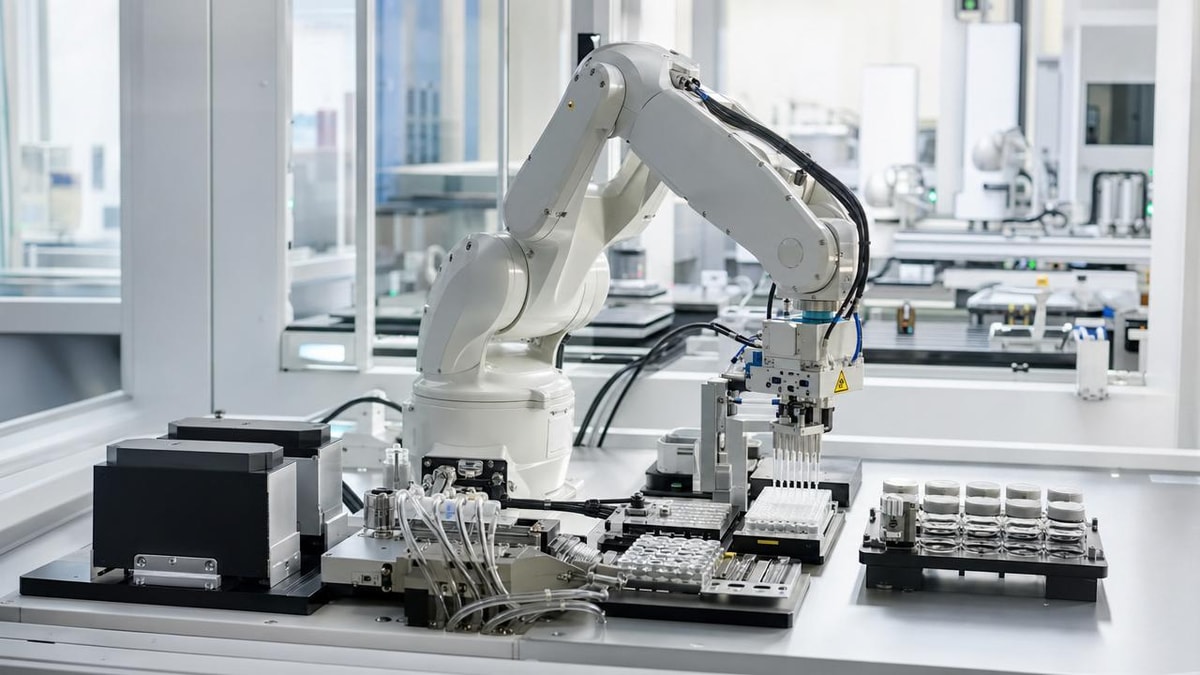

In lab automation, headline specs can distort real-world fit. For technical evaluators comparing robotic arm payload and reach benchmarks, bigger numbers do not always translate into safer motion, finer liquid-handling accuracy, or better integration within constrained workcells. This article examines where standard robot specifications mislead procurement and engineering teams, and how to assess true performance in precision-driven laboratory environments.

For pharmaceutical, chemical, and advanced life-science laboratories, the gap between brochure numbers and deployed performance often appears during integration, not during vendor selection. A robot advertised with a 10 kg payload and 1,300 mm reach may still underperform in pipetting, vial transfer, microplate handling, or centrifuge loading if its wrist inertia limit, repeatability under dynamic load, or motion envelope does not match the actual process.

This matters most in regulated and precision-dependent environments, where even a 1–2 mm path deviation, a small vibration event, or a delayed deceleration profile can affect aspiration depth, cap alignment, seal integrity, or collision margins. For technical evaluators, robust robotic arm payload and reach benchmarks must therefore extend beyond nominal specifications into application-specific proof.

Why Payload and Reach Alone Create False Confidence

Payload and reach are essential starting points, but they are incomplete descriptors of lab suitability. Payload is usually presented as a maximum mass at the wrist under defined conditions. Reach is usually the maximum distance from the robot base to the farthest point of its work envelope, typically measured at full extension in open space. Neither figure alone reveals how the robot behaves when carrying eccentric grippers, liquid-filled consumables, or tooling that shifts the center of gravity during motion.

The difference between rated payload and usable payload

In laboratory automation, usable payload is often 20% to 40% lower than the headline value once end effectors, cable dress packs, sensor brackets, and safety clearances are included. A robot rated for 5 kg may have only 3–4 kg of practical capacity for a complex gripper plus a full SBS plate stack, barcode scanner, and anti-drip accessory. That gap widens if the tooling extends 100–250 mm from the flange, increasing moment load.
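The arithmetic behind usable payload can be sketched in a few lines. This is a minimal illustration, not a vendor calculation: the `ToolingItem` class, the function names, and every mass and offset below are hypothetical values chosen to fall inside the 3–4 kg range described above. The moment estimate assumes a static, horizontal worst-case pose and ignores acceleration.

```python
from dataclasses import dataclass

G = 9.81  # gravitational acceleration, m/s^2


@dataclass
class ToolingItem:
    name: str
    mass_kg: float
    cog_offset_mm: float  # center-of-gravity distance from the flange


def usable_payload_kg(rated_payload_kg, tooling):
    """Rated payload minus everything permanently mounted at the wrist."""
    return rated_payload_kg - sum(item.mass_kg for item in tooling)


def static_moment_nm(items):
    """Static moment about the flange: sum of mass * g * offset."""
    return sum(i.mass_kg * G * (i.cog_offset_mm / 1000.0) for i in items)


# Hypothetical end-of-arm package for a robot rated at 5 kg.
TOOLING = [
    ToolingItem("gripper", 1.10, 80.0),
    ToolingItem("barcode scanner", 0.25, 120.0),
    ToolingItem("cable dress pack", 0.30, 40.0),
]

remaining_kg = usable_payload_kg(5.0, TOOLING)
tooling_moment_nm = static_moment_nm(TOOLING)

print(f"usable payload: {remaining_kg:.2f} kg")
print(f"tooling moment at flange: {tooling_moment_nm:.2f} N*m")
```

Comparing the computed moment against the wrist moment and inertia limits in the vendor's data sheet is the step that headline payload figures skip.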

For liquid handling and microfluidic support tasks, the more relevant question is not whether a robot can lift a vessel, but whether it can move it with stable acceleration and repeatable orientation. A 2 kg load transported with sudden jerk can degrade dosing consistency more than a heavier load moved with smoother path planning.

Maximum reach is not the same as accessible reach

A reach figure measured in open space can be misleading in a real workcell with incubators, biosafety cabinets, balances, centrifuges, and enclosures. In practice, accessible reach may be reduced by 15% to 35% due to joint orientation limits, fixture interference, door swing, and required approach angles. A robot that can technically touch a rack may still be unable to enter with the correct pitch, yaw, or vertical clearance.

This is especially important in compact lab layouts where benchtop footprints are dense and workcell heights vary. Reaching into a centrifuge bucket, accessing the back row of a cold storage deck, or loading a reactor-side sampling tray often demands a usable 3D envelope, not a single radial number.
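As a first screening step, the 15% to 35% reduction can be applied to a nominal reach figure and compared against station distances. The sketch below is an assumption-laden illustration: the 1,300 mm figure comes from the example earlier in this article, while the station coordinates, the function names, and the idea of measuring from a single base origin are hypothetical. Straight-line distance says nothing about pitch, yaw, or clearance, so anything flagged here still needs a proper interference study.

```python
import math

NOMINAL_REACH_MM = 1300.0
REDUCTION_RANGE = (0.15, 0.35)  # range quoted in the text above


def accessible_reach_band(nominal_mm, reduction=REDUCTION_RANGE):
    """Return (pessimistic, optimistic) accessible reach in mm."""
    low, high = reduction
    return nominal_mm * (1.0 - high), nominal_mm * (1.0 - low)


def screen_target(base_xyz, target_xyz, band):
    """Classify a target by straight-line distance from the base origin."""
    pessimistic, optimistic = band
    distance = math.dist(base_xyz, target_xyz)
    if distance <= pessimistic:
        return "within derated reach"
    if distance <= optimistic:
        return "verify approach angle and clearance"
    return "outside derated reach"


BAND = accessible_reach_band(NOMINAL_REACH_MM)

# Hypothetical station positions in mm, relative to the robot base.
STATIONS = {
    "plate hotel": (600.0, 300.0, 200.0),
    "centrifuge bucket": (900.0, 400.0, 300.0),
    "cold storage back row": (1100.0, 500.0, 200.0),
}

for name, xyz in STATIONS.items():
    print(name, "->", screen_target((0.0, 0.0, 0.0), xyz, BAND))
```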

Three common specification traps

- Comparing payload without accounting for end-of-arm tooling mass and offset.

- Comparing reach without validating approach angle, wrist articulation, and overhead clearance.

- Assuming repeatability at low speed remains the same during high-cycle transport or multi-stop motion.

The table below highlights where standard robotic arm payload and reach benchmarks often diverge from laboratory reality.

The key conclusion is straightforward: payload and reach are screening data, not decision data. A technically sound benchmark should include load geometry, path dynamics, workcell constraints, and process sensitivity before a robot is considered fit for deployment.

How to Evaluate Real Performance in Precision Lab Workcells

For G-LSP-aligned evaluation workflows, the most effective approach is to test robot suitability against the four things that define micro-efficiency in practice: fluidic precision, contamination control, compact integration, and repeatable operation from benchtop development through pilot-scale execution. That means translating robotic arm payload and reach benchmarks into process benchmarks.

Start with the true task load, not the nominal object weight

A common mistake is to calculate only the weight of the item being moved. In real cells, total moving mass often includes gripper body, fingers, vacuum manifold, tubing, cable support, sensor mounts, and occasionally disposable adapters. For a plate-handling or sample-transfer routine, the tooling package can represent 30% to 60% of the total wrist burden.

Evaluators should document at least 4 load conditions: empty tooling, nominal filled vessel, worst-case filled vessel, and off-center or asymmetrical load. This is critical when moving reagent bottles, conical tubes, bioreactor samples, or centrifuge carriers where fluid slosh changes the dynamic moment during acceleration and braking.
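One way to document the four load conditions is to record each part as a mass with a center-of-gravity offset and combine them per case. The sketch below is illustrative only: the `Part` class, the part names, and all masses and offsets are hypothetical, and fluid slosh is not modeled (each vessel is treated as a rigid point mass).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Part:
    name: str
    mass_kg: float
    axial_mm: float          # CoG distance from the flange along the tool axis
    lateral_mm: float = 0.0  # CoG distance sideways from the tool axis


def combine(parts):
    """Return (total mass, axial CoG, lateral CoG) for a set of parts."""
    total = sum(p.mass_kg for p in parts)
    axial = sum(p.mass_kg * p.axial_mm for p in parts) / total
    lateral = sum(p.mass_kg * p.lateral_mm for p in parts) / total
    return total, axial, lateral


# Hypothetical tooling package that is always on the wrist.
TOOLING = [
    Part("gripper body and fingers", 0.70, 60.0),
    Part("tubing, cable support, sensor mount", 0.20, 30.0),
]

# The four load conditions named in the text.
LOAD_CASES = {
    "empty tooling": [],
    "nominal filled vessel": [Part("bottle, half full", 0.80, 150.0)],
    "worst-case filled vessel": [Part("bottle, full", 1.20, 150.0)],
    "off-center load": [Part("bottle, full, gripped off-axis", 1.20, 150.0, 25.0)],
}


def summarize(cases=LOAD_CASES, tooling=TOOLING):
    tooling_mass = sum(p.mass_kg for p in tooling)
    rows = {}
    for name, carried in cases.items():
        total, axial, lateral = combine(tooling + carried)
        rows[name] = {
            "total_kg": total,
            "cog_axial_mm": axial,
            "cog_lateral_mm": lateral,
            "tooling_share": tooling_mass / total,
        }
    return rows


SUMMARY = summarize()
for case, row in SUMMARY.items():
    print(case, {k: round(v, 3) for k, v in row.items()})
```

In this made-up example the tooling accounts for roughly 43% of the worst-case wrist burden, which sits inside the 30% to 60% range mentioned above.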

Validate repeatability at application speed

A robot can appear highly accurate at reduced demonstration speed but behave differently at production cadence. For example, moving from 250 mm/s to 800 mm/s may alter settling time, induce vibration, or increase overshoot at the wrist. In pipetting support or cap-handling processes, that difference may affect vertical insertion depth, aspiration alignment, or septum engagement.

Where possible, request repeatability checks under at least 3 velocity profiles and 2 acceleration settings. Useful acceptance criteria for many lab tasks cover not only positional repeatability but also orientation stability, pause-to-pause settling, and low disturbance at the point of dispense or pickup.
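A test plan of 3 velocity profiles by 2 acceleration settings gives six runs, and each run needs a repeatability figure. The sketch below builds that matrix and computes positional repeatability in the style commonly associated with ISO 9283 (mean distance from the barycenter of the attained positions plus three standard deviations). Treat it as an assumption: the 500 mm/s midpoint, both acceleration values, and the function name are hypothetical, and orientation stability and settling time would need separate measurements.

```python
import itertools
import math
import statistics

VELOCITIES_MM_S = (250, 500, 800)    # 250 and 800 appear in the text; 500 is assumed
ACCELERATIONS_MM_S2 = (1000, 3000)   # hypothetical settings

# Every velocity paired with every acceleration: 3 x 2 = 6 runs.
TEST_MATRIX = list(itertools.product(VELOCITIES_MM_S, ACCELERATIONS_MM_S2))


def repeatability_mm(points):
    """Mean distance from the barycenter plus three sample standard deviations.

    `points` is a list of measured (x, y, z) positions in mm for one
    commanded pose, all approached from the same direction.
    """
    if len(points) < 2:
        raise ValueError("need at least two measured positions")
    n = len(points)
    center = tuple(sum(p[i] for p in points) / n for i in range(3))
    distances = [math.dist(p, center) for p in points]
    return statistics.mean(distances) + 3 * statistics.stdev(distances)


for velocity, acceleration in TEST_MATRIX:
    print(f"run at {velocity} mm/s, {acceleration} mm/s^2: collect >= 30 positions")
```

Comparing the resulting figure across the six runs shows whether repeatability measured at demonstration speed survives production cadence.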

Practical evaluation checklist

- Measure total end-of-arm mass and center-of-gravity distance from the flange.

- Map the full approach path to each device, not just the destination point.

- Simulate door openings, rack occupancy, and adjacent equipment interference.

- Test loaded motion over at least 100–300 repeated cycles for stability.

- Confirm recovery behavior after mispick, obstruction, or instrument timeout.

These five steps provide more decision value than comparing brochure payload and reach figures in isolation. They also reduce downstream redesign risk, which can add 2–6 weeks to a project when a robot base, gripper, or deck layout must be reworked after factory acceptance.
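For the repeated-cycle step in the checklist, a simple drift check compares placement error early in the run with placement error late in the run. This is a minimal sketch under stated assumptions: the function name, the 20-cycle window, and the sample data are hypothetical, and a real trial would also examine variance and outliers.

```python
from statistics import mean


def drift_mm(errors_mm, window=20):
    """Mean error over the last `window` cycles minus the first `window` cycles."""
    if len(errors_mm) < 2 * window:
        raise ValueError("need at least two non-overlapping windows of cycles")
    return mean(errors_mm[-window:]) - mean(errors_mm[:window])


# Hypothetical per-cycle placement errors (mm) over a 200-cycle loaded trial.
errors = [0.02] * 100 + [0.05] * 100

print(f"drift over run: {drift_mm(errors):.3f} mm")
```

A positive drift across 100–300 cycles suggests thermal or mechanical effects that a short demonstration would not reveal.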

Check integration constraints early

In constrained labs, the robot is only one element of the system. Technical evaluators should check enclosure geometry, cleanability, cable routing, communication protocols, and utility access before approving a robot on specification alone. A model with adequate reach may still require an oversized pedestal, rear service clearance, or cable bend radius that conflicts with aisle spacing.

For fluidic-precision environments, integration concerns also include droplet control, airflow interactions, vibration transfer to balances, and compatibility with single-use or contamination-sensitive hardware. If the robot introduces micro-disturbances near weighing, microdispensing, or open-vessel transfer, productivity gains can be offset by quality losses.

A Better Benchmarking Framework for Procurement and Engineering Teams

A more reliable framework for robotic arm payload and reach benchmarks combines mechanical capability, process fit, and implementation risk. Rather than asking which robot has the biggest numbers, evaluators should ask which platform has the highest probability of stable operation across the intended 12–36 month application horizon.

The five benchmark dimensions that matter most

For technical assessment, scoring five dimensions is usually more predictive than comparing spec sheets: dynamic load handling, accessible work envelope, loaded repeatability, integration compatibility, and recoverability. Each dimension should be scored against actual use cases such as vial transfer, reactor sampling, plate movement, centrifuge loading, or pipetting support.

The table below provides a practical scoring model that teams can adapt during RFQ review, FAT preparation, or side-by-side vendor comparison.

This framework helps procurement and engineering teams compare platforms on operational fitness rather than inflated headline appeal. It also creates a shared language between automation engineers, lab managers, and quality stakeholders during specification review.
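One way to turn the five dimensions into a comparable number is a weighted score. The sketch below is an illustration, not this article's scoring model: the weights, the 1-to-5 scale, the candidate names, and the individual scores are all hypothetical and should be replaced with values agreed by the evaluation team.

```python
# Hypothetical weights for the five dimensions; they must sum to 1.0.
WEIGHTS = {
    "dynamic_load_handling": 0.25,
    "accessible_work_envelope": 0.25,
    "loaded_repeatability": 0.25,
    "integration_compatibility": 0.15,
    "recoverability": 0.10,
}


def weighted_score(scores, weights=WEIGHTS):
    """Weighted sum of per-dimension scores on a 1 (poor) to 5 (strong) scale."""
    if set(scores) != set(weights):
        raise ValueError("scores must cover exactly the weighted dimensions")
    if any(not 1 <= s <= 5 for s in scores.values()):
        raise ValueError("each score must be between 1 and 5")
    return sum(weights[dim] * scores[dim] for dim in weights)


# Made-up scores for two candidates against one use case (e.g. plate movement).
CANDIDATES = {
    "large arm": {
        "dynamic_load_handling": 3,
        "accessible_work_envelope": 2,
        "loaded_repeatability": 3,
        "integration_compatibility": 2,
        "recoverability": 4,
    },
    "compact arm": {
        "dynamic_load_handling": 4,
        "accessible_work_envelope": 4,
        "loaded_repeatability": 4,
        "integration_compatibility": 4,
        "recoverability": 3,
    },
}

RESULTS = {name: weighted_score(s) for name, s in CANDIDATES.items()}
for name, total in sorted(RESULTS.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name}: {total:.2f}")
```

Scoring each use case separately, rather than averaging across them, keeps a weak result on one critical task from being hidden by strong results elsewhere.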

Match the robot class to the lab task

Not every lab process needs a long-reach or high-payload robot. For many fluidic and sample logistics tasks, a smaller arm with lower inertia and tighter workspace control can outperform a larger unit. In a compact cell, a shorter arm may reduce swept volume, simplify guarding, and improve access consistency to plate hotels, balances, and analyzers.

Typical task categories can be split into at least 3 groups: micro-handling tasks under 1 kg, standard lab transfer tasks between 1 and 5 kg, and heavy auxiliary tasks above 5 kg. Each group benefits from a different tradeoff between reach, stiffness, speed, and footprint. A mismatch here can create avoidable capital cost and slower validation.
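The three groups can be expressed as a small classification helper. This is a sketch under one assumption the text does not settle: loads of exactly 1 kg and exactly 5 kg are placed in the middle group. The function name is hypothetical, and the input should be total moving mass including tooling, not the weight of the object alone.

```python
def task_class(total_moving_mass_kg):
    """Map total moving mass at the wrist to one of the three task groups."""
    if total_moving_mass_kg <= 0:
        raise ValueError("mass must be positive")
    if total_moving_mass_kg < 1.0:
        return "micro-handling"
    if total_moving_mass_kg <= 5.0:  # boundary handling is an assumption
        return "standard lab transfer"
    return "heavy auxiliary"


for mass in (0.4, 2.1, 7.5):
    print(f"{mass} kg -> {task_class(mass)}")
```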

Where larger robots often underperform

- High-density benches where joint sweep creates unnecessary collision zones.

- Microplate or tube workflows where low disturbance matters more than raw lifting capacity.

- Clean or semi-contained environments where compact integration and easy wipe-down are priorities.

Common Procurement Mistakes and How to Avoid Them

Even experienced buying teams can overweight simple metrics because they are easy to compare across vendors. But in laboratory automation, simplified comparison can produce expensive blind spots. The most common procurement errors occur when robotic arm payload and reach benchmarks are separated from process mapping, validation planning, and service assumptions.

Mistake 1: selecting for future expansion without defining the future

Teams sometimes choose the largest robot “for flexibility,” yet never define the 2 or 3 likely future workflows. This can lead to overspecification, larger guarding, higher energy use, and more difficult integration. A better approach is to define expansion in measurable terms: additional stations, heavier containers, longer transport distance, or a second tool changer within a 12–24 month roadmap.

Mistake 2: ignoring wrist orientation and end-effector geometry

Reach to a target point is not enough if the wrist cannot present the gripper correctly. Tasks such as uncapping, angled rack access, and loading through instrument apertures depend on orientation freedom. In some cases, 5-axis versus 6-axis architecture, wrist offset, or flange rotation limits matter more than an extra 100 mm of radial reach.

Mistake 3: treating simulation as proof

Offline simulation is valuable, but it is not a substitute for loaded trials. Digital models may not fully reflect cable drag, fluid motion, grip compliance, or instrument door timing. Before final purchase, teams should request a representative demonstration using comparable tooling and at least one critical path from the real application.

Minimum evidence package before purchase approval

- A reach and interference study for the intended workcell layout.

- A loaded motion trial with realistic payload distribution.

- A repeatability check at target cycle speed.

- An integration review covering controls, safety, and maintenance access.

- A fault recovery demonstration for at least 2 routine upset scenarios.

For high-value pharmaceutical and chemical workflows, this evidence package can prevent late-stage engineering changes, delayed qualification, and hidden total cost of ownership that only emerges after commissioning.
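The evidence package above can be tracked as a simple approval gate. The sketch below is only an illustration of that idea: the item keys and the function name are hypothetical labels for the five items in the list, not part of any standard.

```python
# Hypothetical keys for the five evidence items listed above.
REQUIRED_EVIDENCE = (
    "reach_and_interference_study",
    "loaded_motion_trial",
    "repeatability_at_cycle_speed",
    "integration_review",
    "fault_recovery_demo",
)


def missing_evidence(submitted):
    """Return required items not yet submitted, in checklist order."""
    return [item for item in REQUIRED_EVIDENCE if item not in submitted]


submitted = {"reach_and_interference_study", "integration_review"}
gaps = missing_evidence(submitted)

print("ready for approval" if not gaps else f"still missing: {', '.join(gaps)}")
```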

What Technical Evaluators Should Ask Vendors Before Final Selection

Well-structured vendor questions expose whether quoted robotic arm payload and reach benchmarks are application-ready or merely catalog-ready. The objective is not to challenge the data sheet, but to reveal the conditions behind the numbers and the gap between nominal capability and operational reliability.

High-value questions for RFQ and technical review

- What payload remains available after adding the proposed gripper, utilities, and cable management package?

- How does repeatability change under the intended load and cycle speed?

- Which target positions in the workcell are near joint limits or orientation constraints?

- What settling time is required before a precise place, dispense, or pickup action?

- How many maintenance interventions are typical over 6 months in a multi-shift lab environment?

- Can the vendor support FAT, SAT, and process-specific tuning for regulated workflows?

These questions shift the discussion from broad capability to demonstrated fit. For organizations managing sensitive transitions from R&D to pilot or small-scale production, that shift is essential. It aligns automation choices with bioconsistency, fluidic precision, and controlled scale-up rather than simple equipment comparison.

Payload and reach are useful, but only when placed in context. In modern lab automation, the better benchmark is the one that predicts stable performance in the real workcell: with true tooling, true paths, true loads, and true quality constraints. For technical evaluators, that means moving beyond headline robotic arm payload and reach benchmarks toward a broader assessment of dynamic behavior, accessible envelope, loaded repeatability, and integration risk.

G-LSP supports this decision model by framing automation around measurable micro-efficiency, regulatory alignment, and practical deployment value across reactors, microfluidics, bioreactors, centrifugation, and liquid handling systems. If your team is comparing robotic platforms for precision-driven laboratory environments, contact us to discuss benchmark criteria, integration priorities, or a tailored evaluation framework. Get a customized solution and explore more fit-for-purpose lab automation strategies today.

