Automated Dilution Factor Precision: What Causes Drift?

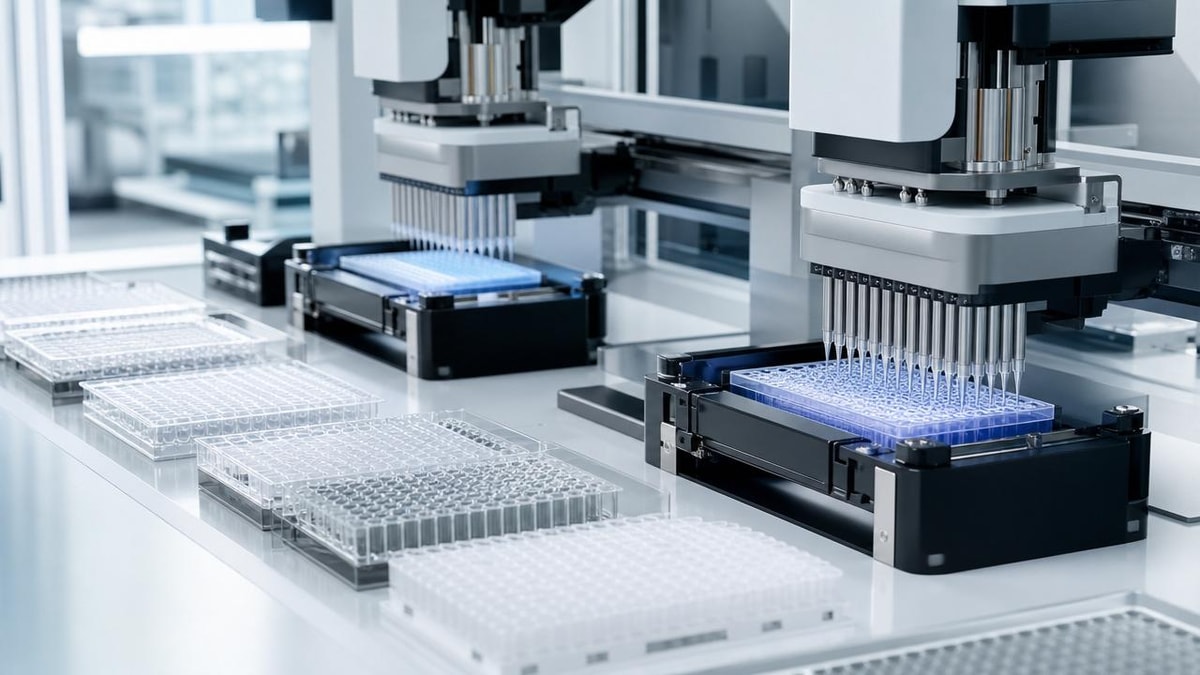

Why does automated dilution factor precision drift even in tightly controlled lab environments? For quality control and safety teams, small deviations can trigger out-of-spec results, compliance risks, and costly process instability. This article examines the technical, operational, and calibration-related causes behind dilution drift, helping decision-makers identify hidden failure points and strengthen consistency from method validation to routine production.

Why scenario differences matter more than theory alone

In practice, automated dilution factor precision rarely drifts for just one reason. The same liquid handling platform may perform flawlessly in assay preparation, then show measurable bias during viscous reagent transfer, low-volume serial dilution, or long unattended production runs. For quality control personnel and safety managers, this is why context matters: drift is not only a metrology problem, but a scenario-dependent control problem.

A pharmaceutical QC lab validating release assays will view automated dilution factor precision through the lens of repeatability, traceability, and audit readiness. A process safety team reviewing concentrated acid or solvent handling will focus more on exposure reduction, containment, and whether drift could distort hazard classification or reaction charge calculations. Procurement and engineering teams, meanwhile, need to know whether a system that looks accurate in a factory acceptance test will remain stable under their actual sample matrix, throughput, and cleaning routine.

That is why the most useful question is not simply, “What causes drift?” It is, “In which operational scenarios is drift most likely, what evidence reveals it, and what controls are appropriate for that use case?”

Where automated dilution factor precision problems usually appear

Across laboratory and pilot-scale environments, automated dilution factor precision issues tend to cluster around several high-impact scenarios. Each one creates different risk patterns for product quality, worker safety, and regulatory compliance.

1. Routine QC assay preparation

This is the most familiar scenario: standards, controls, and samples are diluted in defined ratios every day. Drift often remains hidden because operators trust the programmed recipe, yet repeated micro-errors in aspiration, dispense timing, or tip wetting can shift assay linearity over time. Here, automated dilution factor precision is directly tied to the integrity of reportable data.

2. Serial dilution for potency, toxicity, or microbiology workflows

Serial dilution amplifies any small inaccuracy from one step to the next. A minor volume deviation in the first transfer can become a major concentration error in later wells or tubes. In this setting, drift is cumulative, and quality teams should not rely solely on single-point volume verification.

3. Viscous, foaming, or volatile liquid handling

Buffers with surfactants, protein-rich media, solvents, and sticky reagents challenge aspiration physics. Bubbles, evaporation, retained film, and incomplete dispense can all distort the intended factor. For safety teams, volatile or corrosive materials add another layer: containment settings or slower transfer profiles may improve operator protection but can reduce throughput and degrade precision if they are not tuned correctly.

4. Method transfer from development to production support

A dilution method validated on one platform may drift when moved to another site, another head configuration, or another environmental zone. This is a common source of hidden failure because teams assume digital method duplication equals equivalent fluidic behavior. It does not.

5. Long-run unattended operation

In high-throughput labs, automated systems may run for hours with minimal intervention. Thermal drift, mechanical wear, reservoir level changes, evaporation, or pressure instability can slowly shift automated dilution factor precision during the run. These deviations are easy to miss if verification is done only at startup.
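To give a sense of scale for the evaporation effect in long runs, here is a minimal sketch. It assumes a prepared dilution sits uncovered, loses only solvent at a constant rate, and that the solute is non-volatile. The function name and the loss rate are hypothetical and chosen only for illustration; real loss rates depend on the liquid, the vessel geometry, and the airflow.

```python
def evaporation_bias(volume_ul, loss_ul_per_h, hold_h):
    """Relative concentration increase after solvent-only evaporation.

    Assumes a constant loss rate and a non-volatile solute (simplified model).
    """
    remaining_ul = volume_ul - loss_ul_per_h * hold_h
    if remaining_ul <= 0:
        raise ValueError("model predicts the vessel has evaporated to dryness")
    # Same amount of solute in less solvent: concentration scales by V0 / V.
    return volume_ul / remaining_ul - 1.0


# Hypothetical case: a 200 µL well losing 2 µL per hour over a 4 hour hold.
bias = evaporation_bias(volume_ul=200.0, loss_ul_per_h=2.0, hold_h=4.0)
print(f"Concentration bias after hold: {bias:+.1%}")
```

Under these assumed numbers the well ends up a little over 4% more concentrated than intended, a shift that a startup-only check would not see.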

Scenario comparison: what causes drift and what teams should check

The table below helps QC and safety stakeholders align drift investigation with actual operating conditions rather than generic troubleshooting.

The main technical causes behind automated dilution factor precision drift

Although application context shapes risk, several technical causes appear repeatedly across industries.

Calibration no longer reflects real operating conditions

A system may be “in calibration” yet still produce drift if calibration was performed with water-like liquids under ideal temperature and humidity, while actual production uses denser, more volatile, or more viscous fluids. Automated dilution factor precision depends on the relevance of calibration, not only the existence of a certificate. Labs should distinguish instrument calibration from process-representative performance qualification.
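The gap between a water-based calibration and a real matrix can be illustrated with the basic gravimetric conversion, volume = mass / density. The sketch below is a simplification: it ignores air-buoyancy and temperature corrections, and the 1.12 g/mL matrix density is a hypothetical value rather than a figure from any specific method.

```python
WATER_DENSITY_G_PER_ML = 0.9982  # water near 20 °C


def dispensed_volume_ul(mass_mg, density_g_per_ml):
    """Convert a weighed dispense to volume (mg divided by g/mL gives µL)."""
    return mass_mg / density_g_per_ml


# Hypothetical matrix density, for illustration only.
matrix_density = 1.12
mass_mg = 112.0  # balance reading for one dispense

true_ul = dispensed_volume_ul(mass_mg, matrix_density)
apparent_ul = dispensed_volume_ul(mass_mg, WATER_DENSITY_G_PER_ML)
print(f"Volume using matrix density: {true_ul:.1f} µL")
print(f"Volume using water density:  {apparent_ul:.1f} µL")
```

With these assumed values, a dispense that is actually on target at 100 µL appears about 12% high when the water density is applied. The same mismatch can just as easily hide a real error, which is why the density used in verification has to match the liquid being handled.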

Liquid class settings are mismatched to the sample matrix

Aspiration speed, dispense speed, blowout volume, pre-wet cycles, settle time, and air gaps all affect transfer behavior. A default protocol that works for aqueous standards may underperform with protein formulations, solvents, or high-salt solutions. This is one of the most common and most underestimated sources of automated dilution factor precision drift.
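One way to keep these settings explicit is to store them per matrix and refuse to fall back silently to a default. The following is a sketch only: `LiquidClass`, `LIQUID_CLASSES`, and `liquid_class_for` are hypothetical names, not any vendor's API, and every numeric value is a placeholder rather than a recommended setting.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LiquidClass:
    """Transfer parameters that together define how one matrix is handled."""
    aspirate_ul_per_s: float
    dispense_ul_per_s: float
    blowout_ul: float
    prewet_cycles: int
    settle_s: float
    air_gap_ul: float


# Placeholder values for illustration; real settings come from
# matrix-specific qualification on the actual platform.
LIQUID_CLASSES = {
    "aqueous_standard": LiquidClass(
        aspirate_ul_per_s=100.0, dispense_ul_per_s=150.0, blowout_ul=10.0,
        prewet_cycles=1, settle_s=0.2, air_gap_ul=5.0),
    "protein_formulation": LiquidClass(
        aspirate_ul_per_s=30.0, dispense_ul_per_s=40.0, blowout_ul=20.0,
        prewet_cycles=3, settle_s=1.0, air_gap_ul=10.0),
}


def liquid_class_for(matrix):
    """Return the qualified class for a matrix, or fail loudly if none exists."""
    try:
        return LIQUID_CLASSES[matrix]
    except KeyError:
        raise KeyError(f"No qualified liquid class for matrix {matrix!r}") from None


print(liquid_class_for("protein_formulation"))
```

Raising an error for an unqualified matrix is a deliberate design choice in this sketch: it turns "the default protocol was used by accident" into a visible failure instead of a hidden source of drift.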

Mechanical wear and consumable variability

Seals, syringes, valves, and pump heads wear gradually. Tips from different lots may vary in geometry, fit, or surface energy. Tubing fatigue can alter pressure response. These changes may remain invisible until trend analysis reveals a slow shift in recovery or replicate spread.

Environmental influences inside “controlled” labs

Controlled does not mean static. Day-night temperature variation, HVAC airflow, static electricity, vibration from adjacent equipment, and humidity changes can influence balance-based verification and low-volume fluid transfer. For volatile materials, even enclosure design can affect evaporation and therefore final concentration.

Software assumptions and workflow design flaws

Sometimes the hardware performs correctly, but the programmed dilution logic is wrong for the process. Examples include insufficient mix cycles, wrong dead-volume assumptions, incorrect deck mapping, and no compensation for hold times between aspiration and dispense. In audits, these failures are often misclassified as random operator error when they are actually method design issues.
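A wrong dead-volume assumption is the easiest of these to check before a run. The sketch below assumes a single source vessel and identical transfers; the function name and all volumes are hypothetical.

```python
def source_shortfall_ul(fill_ul, dead_ul, transfer_ul, n_transfers):
    """Return how many µL the source is short, or 0.0 if it is sufficient.

    The dead volume is liquid that cannot be aspirated reliably, so it must
    be added to the total volume the transfers will consume.
    """
    needed_ul = dead_ul + transfer_ul * n_transfers
    return max(0.0, needed_ul - fill_ul)


# Hypothetical plan: ten 100 µL transfers from a 1000 µL fill, 50 µL dead volume.
shortfall = source_shortfall_ul(fill_ul=1000.0, dead_ul=50.0,
                                transfer_ul=100.0, n_transfers=10)
print(f"Source shortfall: {shortfall:.0f} µL")
```

In this assumed plan the fill looks adequate on paper, yet the last transfer would draw from the dead volume and is likely to under-aspirate. That is a method design issue, not a random operator error.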

How QC personnel and safety managers prioritize differently

Both groups care about automated dilution factor precision, but their decision criteria are not identical. Understanding this difference helps organizations build stronger cross-functional controls.

In mature operations, the best control strategy combines both views: concentration accuracy must be proven without creating unsafe manual interventions or uncontrolled rework. This is especially important in pharmaceutical, chemical, and advanced R&D environments where a drift event may affect not only one result, but also downstream release decisions, process interpretation, or hazard evaluation.

Scenario-based recommendations for preventing drift

For routine regulated QC labs

Use matrix-relevant verification, not just water-based gravimetric acceptance. Establish periodic concentration recovery checks using representative standards. Trend results by instrument, tip lot, operator, and time period. If automated dilution factor precision changes gradually, statistical drift signals usually appear before formal failure.
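As one illustration of how a statistical drift signal can appear before a formal failure, the sketch below applies a tabular CUSUM to recovery results. CUSUM is a common control-chart technique chosen here for illustration; the text above does not prescribe a specific method. The recovery values, the slack, and the decision threshold are hypothetical.

```python
def first_cusum_signal(values, target, slack, threshold):
    """Return the index of the first CUSUM signal, or None if there is none.

    Two one-sided sums accumulate deviations beyond `slack` above and below
    `target`; a signal is raised when either sum exceeds `threshold`.
    """
    high = low = 0.0
    for i, x in enumerate(values):
        high = max(0.0, high + (x - target) - slack)
        low = max(0.0, low + (target - x) - slack)
        if high > threshold or low > threshold:
            return i
    return None


# Hypothetical recovery results in percent; each one is within 1% of target.
recoveries = [100.1, 99.9, 100.0, 99.6, 99.5, 99.4, 99.3, 99.2, 99.1]
signal_at = first_cusum_signal(recoveries, target=100.0, slack=0.25, threshold=2.0)
print(f"First drift signal at check index: {signal_at}")
```

Every value in this example would pass a simple within-1% acceptance check, but the accumulated downward deviation still raises a signal at the last check. Slack and threshold would need to be set from the lab's own historical variability.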

For serial dilution and bioassay applications

Validate each transfer stage, mixing efficiency, and carryover risk. Consider whether direct dilution from source is more robust than multi-step serial designs for critical assays. When the assay response is nonlinear, even subtle dilution drift can distort interpretation beyond what replicate precision suggests.
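The direct-versus-serial question can be framed with a first-order error estimate. The sketch assumes each stage contributes an independent random error, so relative standard deviations combine as a root sum of squares. The per-stage figures are hypothetical, and systematic bias and mixing effects are not covered.

```python
import math


def combined_relative_sd(stage_relative_sds):
    """Combine independent per-stage relative SDs (first-order approximation)."""
    return math.sqrt(sum(u * u for u in stage_relative_sds))


# Hypothetical comparison for an overall 1:1000 dilution.
serial = combined_relative_sd([0.01, 0.01, 0.01])  # three 1:10 stages at 1% each
direct = combined_relative_sd([0.03])              # one small-volume stage at 3%

print(f"Three-stage serial: {serial:.2%}")
print(f"Single-stage direct: {direct:.2%}")
```

Which design comes out ahead depends entirely on the measured stage performance. A direct dilution removes compounding but may require a very small transfer volume with poorer precision, so the comparison should use values taken from stage-level verification rather than assumed ones.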

For hazardous or volatile liquid environments

Review the interaction between safety controls and fluidic behavior. Closed enclosures, slower movement, or delayed lid access may improve safety but also increase dwell time and evaporation exposure. Validate automated dilution factor precision under the same containment and timing conditions used in production, not in an open bench surrogate setup.

For scale-up support and multi-site deployment

Do not treat method files as universally transferable. Requalify on the receiving platform with local consumables, environmental conditions, and actual sample matrices. A disciplined benchmarking approach, such as a standards-based qualification program, is valuable because it reveals whether equivalent settings actually produce equivalent outcomes across sites.

Common misjudgments that allow drift to persist

- Treating automated dilution factor precision as a one-time validation topic instead of a lifecycle control topic.

- Using water-based checks to represent all liquids.

- Ignoring the cumulative nature of serial dilution error.

- Assuming a compliant calibration schedule eliminates the need for runtime verification.

- Investigating only obvious failures while missing slow drift visible in trend data.

- Separating QC review from safety review, even when both are affected by the same fluid handling deviation.

FAQ: practical questions from quality and safety teams

How often should automated dilution factor precision be checked?

It depends on risk, matrix complexity, and run duration. High-impact regulated assays and hazardous chemical workflows typically justify startup checks plus periodic in-run or end-of-run verification.

Is calibration drift the same as dilution drift?

No. Calibration drift is one contributor, but automated dilution factor precision can also drift because of liquid properties, software logic, environmental variation, consumable changes, or poor mixing.

Which scenario deserves the highest caution?

Serial dilution with low volumes and difficult matrices is especially sensitive, because small inaccuracies compound quickly. Hazardous volatile liquids also deserve elevated caution because safety and analytical accuracy can fail together.
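The compounding effect can be made concrete with a short calculation. The sketch assumes the same systematic bias on every transfer, an exact diluent volume, and complete mixing. The function name and the 2% under-delivery are hypothetical.

```python
def serial_dilution_errors(transfer_ul, diluent_ul, transfer_bias, steps):
    """Relative concentration error after each step of a serial dilution.

    `transfer_bias` is the fractional error on every transfer volume,
    for example -0.02 for a consistent 2% under-delivery.
    """
    nominal_fraction = transfer_ul / (transfer_ul + diluent_ul)
    actual_transfer = transfer_ul * (1.0 + transfer_bias)
    actual_fraction = actual_transfer / (actual_transfer + diluent_ul)

    errors = []
    ratio = 1.0  # actual concentration divided by nominal concentration
    for _ in range(steps):
        ratio *= actual_fraction / nominal_fraction
        errors.append(ratio - 1.0)
    return errors


# Hypothetical six-step 1:10 series with a 2% under-delivery on each transfer.
errors = serial_dilution_errors(transfer_ul=100.0, diluent_ul=900.0,
                                transfer_bias=-0.02, steps=6)
for step, err in enumerate(errors, start=1):
    print(f"Step {step}: {err:+.2%}")
```

Under these assumptions the first step is low by a little under 2%, while the sixth is low by roughly 10%. A single-point volume check on the first transfer would not reveal the size of the error in the final wells.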

Turning scenario insight into a stronger control plan

The most effective response to automated dilution factor precision drift is not a generic maintenance reminder. It is a scenario-specific control plan that links liquid type, application criticality, verification frequency, containment needs, and method design assumptions. For QC teams, that means building trend-based evidence instead of relying on pass/fail snapshots. For safety managers, it means confirming that protective measures do not unintentionally alter dilution performance.

If your organization handles regulated assays, potent compounds, volatile solvents, or multi-site method transfer, the next step is to map your actual dilution scenarios and test them under realistic conditions. That is where hidden drift becomes visible—and where more reliable, compliant, and safer fluidic performance begins.

Expert Insights

Chief Security Architect

Dr. Thorne specializes in the intersection of structural engineering and digital resilience. He has advised three G7 governments on industrial infrastructure security.
