Microplate Processing Time Data That Impacts Daily Output

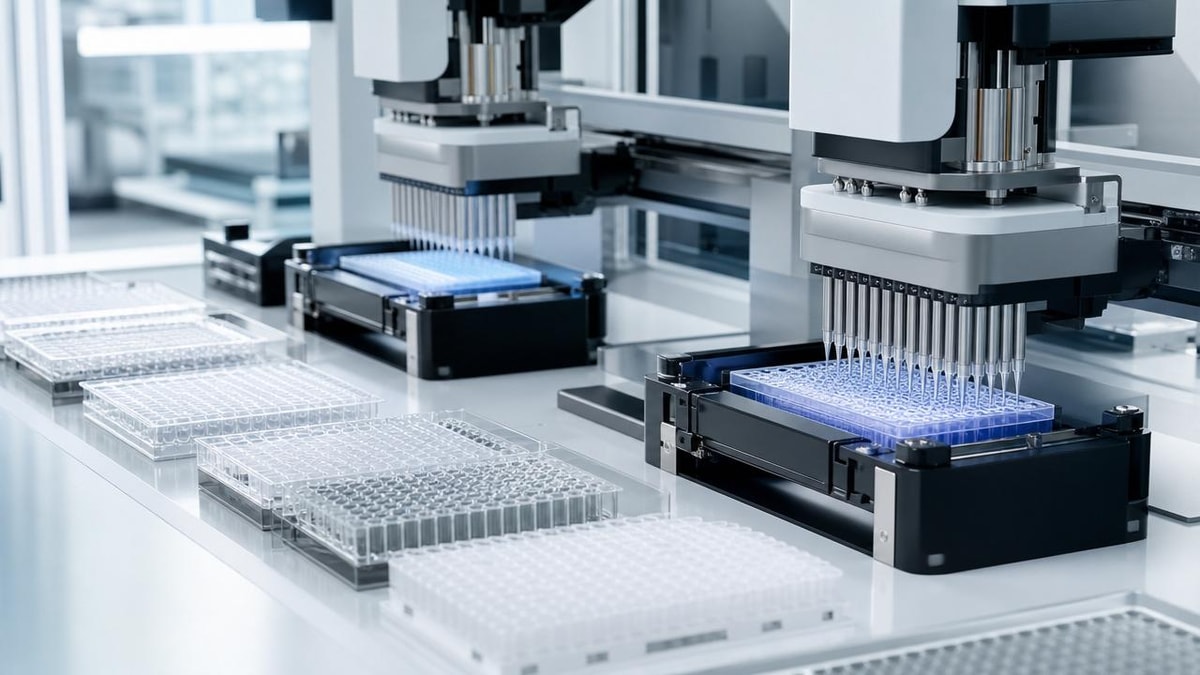

For lab operators under constant pressure to increase throughput, microplate processing time data is more than a metric—it directly shapes daily output, workflow stability, and error control. Understanding how handling speed, transfer accuracy, and equipment timing interact helps teams reduce bottlenecks, improve repeatability, and make smarter decisions about plate-based operations in demanding production and research environments.

In plate-based workflows, a difference of 8 to 20 seconds per plate can accumulate into a major daily capacity gap across 50, 100, or 300 runs. For operators in pharmaceutical development, chemical screening, QC testing, and fluidic precision environments, the practical value of microplate processing time data lies in how clearly it reveals hidden losses: waiting between steps, rework caused by transfer inconsistency, and underused automation capacity.
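
As a rough illustration of how that per-plate difference scales, the sketch below multiplies the 8 to 20 second range by the run counts mentioned above. The function name is illustrative, and the calculation assumes the delay applies uniformly to every plate.

```python
def daily_time_gap_minutes(seconds_per_plate: float, plates_per_day: int) -> float:
    """Extra minutes per day caused by a fixed per-plate time difference."""
    return seconds_per_plate * plates_per_day / 60.0


for plates in (50, 100, 300):
    low = daily_time_gap_minutes(8, plates)
    high = daily_time_gap_minutes(20, plates)
    print(f"{plates} plates: {low:.1f} to {high:.1f} extra minutes per day")
```

At 300 plates, the upper end of the range works out to 100 minutes per day.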

Within high-control B2B lab settings, especially those managing the transition from benchtop methods to scale-relevant production, timing data should not be treated as a standalone machine specification. It must be read together with liquid handling accuracy, deck movement logic, incubation or separation dependencies, and operator intervention frequency. This is where technical benchmarking becomes useful for users who need reliable output rather than theoretical speed claims.

Why Microplate Processing Time Data Directly Affects Daily Throughput

For operators, daily output is rarely limited by one dramatic failure. More often, it is reduced by repeated micro-delays across 3 to 7 workflow stages: plate loading, aspiration and dispense cycles, mixing, incubation transfer, sealing or unsealing, reading, and unloading. Accurate microplate processing time data allows teams to quantify these delays and decide whether the constraint is equipment speed, plate logistics, or manual handling.

The difference between rated speed and usable speed

A liquid handler may advertise high pipetting throughput, but usable throughput depends on the full cycle time per plate. If a system dispenses in 25 seconds but requires 40 more seconds for alignment, tip change, deck travel, and verification, the actual process time becomes 65 seconds. Over 120 plates per shift, those extra 40 seconds add about 80 minutes of hidden processing time.
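
The gap between rated and usable speed can be checked with a few lines of arithmetic. This is a minimal sketch using the figures from the paragraph above; the function and key names are hypothetical and not taken from any instrument software.

```python
def usable_throughput(dispense_s: float, overhead_s: float, plates_per_shift: int) -> dict:
    """Compare rated (dispense-only) and usable (full-cycle) figures."""
    cycle_s = dispense_s + overhead_s
    return {
        "cycle_s": cycle_s,
        "rated_plates_per_hour": 3600 / dispense_s,
        "usable_plates_per_hour": 3600 / cycle_s,
        # Time spent on alignment, tip change, travel, and verification
        "hidden_minutes_per_shift": overhead_s * plates_per_shift / 60,
    }


result = usable_throughput(dispense_s=25, overhead_s=40, plates_per_shift=120)
for name, value in result.items():
    print(f"{name}: {value:.1f}")
```

With these inputs the rated figure is 144 plates per hour, while the usable figure is roughly 55.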

Operators should therefore compare at least four timing layers: pure dispense time, total plate cycle time, batch start-to-finish time, and intervention time per 8-hour shift. This gives a more realistic view of whether a platform fits high-mix research work, repetitive assay production, or regulated QC routines.

Where time loss usually appears in plate operations

In many labs, the biggest losses are not in the dispensing head itself. They show up in dead time between linked systems, such as a reader waiting for a plate transporter, or an operator pausing a run to replace consumables after every 20 to 30 plates. Small interruptions can cut effective throughput by 10% to 25%, especially in workflows using 96-well, 384-well, and mixed-format plates on the same day.

- Deck travel and plate positioning delays

- Tip loading, washing, or replacement intervals

- Manual barcode confirmation and sample traceability checks

- Integration lag between pipetting, incubation, centrifugation, and reading steps

- Repeat transfers caused by viscosity, bubble formation, or low-volume error

The table below shows how common timing elements influence practical output in plate-based environments.

The key takeaway is that microplate processing time data becomes meaningful only when each timing element is measured at workflow level. A machine that appears 15% faster in a brochure may produce less daily output if it requires more interventions or longer setup between formats.
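
To see how a faster brochure figure can still lose on daily output, the sketch below compares two hypothetical machines over an 8-hour shift. All cycle times, stop intervals, and stop durations are invented for illustration, and the model assumes one fixed-length stop after every block of plates.

```python
def plates_per_shift(cycle_s, plates_between_stops, stop_s, shift_s=8 * 3600):
    """Plates completed in a shift when a stop of stop_s seconds
    follows every block of plates_between_stops plates."""
    block_s = cycle_s * plates_between_stops + stop_s
    full_blocks, remaining_s = divmod(shift_s, block_s)
    # Plates finished in the final partial block, capped at the block size
    extra = min(plates_between_stops, int(remaining_s // cycle_s))
    return int(full_blocks) * plates_between_stops + extra


# Machine A: 60 s cycle, a 2-minute reload every 40 plates (hypothetical)
machine_a = plates_per_shift(cycle_s=60, plates_between_stops=40, stop_s=120)
# Machine B: 15% faster cycle, but a 5-minute intervention every 20 plates (hypothetical)
machine_b = plates_per_shift(cycle_s=51, plates_between_stops=20, stop_s=300)

print(f"Machine A: {machine_a} plates, Machine B: {machine_b} plates")
```

Under these assumptions the slower machine finishes 458 plates against 440 for the faster one, because interventions consume more time than the cycle advantage recovers.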

How Operators Should Read Time Data in Real Lab Conditions

Time data must reflect the actual conditions of use: sample viscosity, plate density, transfer volume, contamination control rules, and the number of connected devices. In multidisciplinary environments such as G-LSP benchmarked workflows, operators often work across liquid handling, microfluidic precision, centrifugation, and bioconsistent process hardware. That means the useful question is not “How fast is one unit?” but “How stable is the full workflow over 1 shift, 3 shifts, or a 5-day campaign?”

Measure three levels of timing, not one

A robust timing review should include at least three levels. First is step time, such as plate transfer or dispense duration. Second is cycle time, meaning the full time from one plate entering the station to the next plate being ready. Third is campaign time, covering the output of a complete run including pauses, consumable reloads, and restarts. Many output problems only become visible at the third level.
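
The three levels can be derived from a simple plate log. The sketch below uses a hypothetical five-plate log with one consumable reload; the tuple layout is an assumption, and the median is used for cycle time so that a single pause does not distort the typical value.

```python
from statistics import mean, median

# Hypothetical log: (plate_enters_s, dispense_duration_s, plate_done_s)
log = [
    (0, 25, 65),
    (65, 25, 130),
    (130, 26, 196),
    (376, 25, 441),  # 180 s pause before this plate for a consumable reload
    (441, 25, 506),
]

# Level 1: step time (dispense duration only)
step_time = mean(duration for _, duration, _ in log)

# Level 2: cycle time (entry of one plate to entry of the next)
cycle_times = [nxt[0] - cur[0] for cur, nxt in zip(log, log[1:])]
cycle_time = median(cycle_times)

# Level 3: campaign time (first entry to last completion, pauses included)
campaign_time = log[-1][2] - log[0][0]
campaign_rate = len(log) / campaign_time * 3600

print(f"step {step_time:.1f} s, cycle {cycle_time:.0f} s, campaign {campaign_time} s")
print(f"campaign rate: {campaign_rate:.1f} plates/hour vs {3600 / cycle_time:.1f} from cycle time alone")
```

In this example the cycle time suggests about 55 plates per hour, while the campaign view, which includes the reload pause, gives about 36.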

A practical timing review sequence

- Record the processing time of 10 consecutive plates under normal operating settings.

- Separate manual time from automated time.

- Repeat measurement for at least 2 plate formats or 2 transfer volumes if your lab uses both.

- Track error or rework events during the same run.

- Calculate output per hour and output per operator, not just per instrument.

This simple 5-step method helps users avoid a common mistake: selecting equipment based only on maximum speed under ideal conditions. In real operations, consistency across 30, 60, or 200 plates is usually more important than the fastest single cycle.
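
The review sequence can be captured in a small script. This is a sketch only: the record fields and the sample run are hypothetical, and it assumes manual and automated time do not overlap. For the format comparison in the third step, the same function would be called once per plate format or transfer volume.

```python
from dataclasses import dataclass


@dataclass
class PlateRecord:
    automated_s: float  # instrument time for this plate
    manual_s: float     # operator hands-on time for this plate
    rework: bool        # True if the plate needed a repeat transfer


def review(records, operators=1):
    """Summarize a run of consecutive plates."""
    total_auto = sum(r.automated_s for r in records)
    total_manual = sum(r.manual_s for r in records)
    total_s = total_auto + total_manual
    plates_per_hour = len(records) / total_s * 3600
    return {
        "plates": len(records),
        "manual_share": total_manual / total_s,
        "rework_rate": sum(r.rework for r in records) / len(records),
        "plates_per_hour": plates_per_hour,
        "plates_per_operator_hour": plates_per_hour / operators,
    }


# Ten consecutive plates, one of which needed a repeat transfer (invented data)
run = [PlateRecord(65, 10, False)] * 9 + [PlateRecord(65, 25, True)]
summary = review(run, operators=2)
for name, value in summary.items():
    print(f"{name}: {value:.2f}")
```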

Format, volume, and precision all change the timing profile

Microplate processing time data changes significantly with plate format and fluidic demands. A 96-well routine fill at 50–200 µL per well does not behave like a 384-well assay with 1–10 µL transfers. Lower volumes typically require slower aspiration and dispense tuning to preserve precision, while viscous or cell-based materials may need additional mixing or settling time. In these cases, a “fast” program can increase retest rates and reduce true productivity.

For users handling sensitive biologics, personalized therapeutics, or formulation screening, the tradeoff between speed and repeatability should be documented in standard operating windows. A plate completed in 55 seconds is not necessarily more productive than one completed in 70 seconds if the faster method creates a 3% to 5% repeat rate, because every repeat consumes sample, reagent, and operator review time on top of the extra cycle.
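
One way to test this tradeoff on your own numbers is to compute the average time per accepted plate. The sketch below assumes each repeated plate is rerun once and then passes, that the slower method has no repeats, and that each repeat carries a fixed rework overhead; that overhead parameter is hypothetical and should come from your own records.

```python
def effective_seconds_per_good_plate(cycle_s, repeat_rate, rework_overhead_s=0.0):
    """Average seconds per accepted plate when a fraction of plates is rerun once."""
    return cycle_s + repeat_rate * (cycle_s + rework_overhead_s)


def break_even_overhead_s(fast_cycle_s, slow_cycle_s, repeat_rate):
    """Rework overhead per repeat at which the fast method stops being faster."""
    return (slow_cycle_s - fast_cycle_s) / repeat_rate - fast_cycle_s


for rate in (0.03, 0.05):
    no_overhead = effective_seconds_per_good_plate(55, rate)
    limit = break_even_overhead_s(55, 70, rate)
    print(f"repeat rate {rate:.0%}: {no_overhead:.2f} s per good plate "
          f"with no overhead, break-even overhead {limit:.0f} s per repeat")
```

On cycle time alone the 55-second method stays ahead. The gap closes only when each repeat costs several minutes of investigation, re-preparation, or documentation, which is why the overhead per repeat is the number worth measuring.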

Selecting Equipment and Workflow Configurations with Time Data in Mind

When comparing systems, operators and procurement teams should use microplate processing time data as one of several linked criteria. It should be evaluated alongside precision, compatibility, cleaning burden, software logic, and scalability. This is especially important in B2B environments where one platform may need to support R&D screening today and validation-heavy production support tomorrow.

What to ask before choosing a plate handling or liquid handling system

The right questions can prevent months of mismatch between user expectations and installed performance. A platform that suits pilot-scale development may not be ideal for repetitive QA/QC plate routines, while a high-throughput configuration may be oversized for lower-volume analytical tasks.

- What is the average full cycle time per plate under the target protocol?

- How many operator touches are required per 50 or 100 plates?

- How does timing change between 96-well and 384-well formats?

- What is the expected rework rate when running low-volume transfers below 5 µL?

- How long does cleaning, decontamination, or tip replenishment take?

- Can the system maintain timing stability during 4-hour to 8-hour continuous operation?

The following comparison table can help operators and buyers frame timing-related selection decisions more clearly.

The strongest selection decisions come from balancing speed with stability. For operators, a system that keeps cycle time within a narrow variation band—such as less than 5% drift over a shift—often creates better scheduling confidence than a system with higher peak speed but inconsistent batch behavior.
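
A variation band like this is easy to monitor once cycle times are logged. The sketch below defines drift as the percent change in mean cycle time between the first and last plates of a shift; that definition, the window size, and the sample data are assumptions, not a standard.

```python
from statistics import mean


def cycle_drift_pct(cycle_times_s, window=10):
    """Percent change in mean cycle time between the first and last `window` plates."""
    if len(cycle_times_s) < 2 * window:
        raise ValueError("need at least two full windows of cycle times")
    start = mean(cycle_times_s[:window])
    end = mean(cycle_times_s[-window:])
    return (end - start) / start * 100


# Invented shift: cycle time creeps from 65 s to 67 s halfway through
shift = [65.0] * 50 + [67.0] * 50
drift = cycle_drift_pct(shift)
print(f"drift over shift: {drift:.2f}% (within 5% band: {abs(drift) < 5})")
```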

When benchmarking matters most

Benchmarking is especially valuable when labs are moving from manual or semi-automated plate processing to more integrated platforms. In these transitions, user teams need to compare not only output but also transfer consistency, workflow handoff time, and compatibility with ISO-, USP-, or GMP-aligned operational controls. A process that saves 90 minutes per day but increases review and deviation handling may not produce real operational gain.

Implementation, Risk Control, and Daily Optimization for Operators

Once equipment is installed, the next challenge is converting nominal capability into repeatable daily output. This requires operators to use microplate processing time data as a live management tool, not a one-time qualification record. Time trends should be reviewed weekly or monthly, especially after protocol edits, sample changes, or service events.

Three common mistakes that reduce throughput

The first mistake is optimizing only the dispensing step while ignoring upstream and downstream timing. The second is using one default method for all sample types, even when viscosity or foaming behavior differs. The third is failing to define an intervention threshold, such as maximum acceptable alarm frequency per 100 plates or acceptable delay per batch.

In practice, many labs benefit from setting clear control points. Examples include investigating any cycle time increase above 10%, reviewing any repeat transfer rate above 2%, and checking consumable stock planning whenever operator touchpoints occur more often than once every 25 to 40 plates. These simple thresholds improve stability without adding unnecessary complexity.
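
These control points translate directly into a threshold check. The sketch below is one possible encoding; the function name and message wording are hypothetical, and the touchpoint limit defaults to the lower end of the 25 to 40 plate range.

```python
def control_flags(baseline_cycle_s, current_cycle_s, repeat_rate,
                  plates_per_touch, min_plates_per_touch=25):
    """Return the control points that the current run has crossed."""
    flags = []
    if current_cycle_s > baseline_cycle_s * 1.10:
        flags.append("cycle time up more than 10%: investigate")
    if repeat_rate > 0.02:
        flags.append("repeat transfer rate above 2%: review")
    if plates_per_touch < min_plates_per_touch:
        flags.append("operator touches too frequent: check consumable stock planning")
    return flags


# Invented run: cycle time rose from 65 s to 73 s, other values in range
for flag in control_flags(65, 73, repeat_rate=0.015, plates_per_touch=30):
    print(flag)
```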

A workable optimization checklist for daily use

Operator-focused control actions

- Review average cycle time at the start and end of each shift.

- Separate waiting time from active processing time.

- Log every manual interruption above 60 seconds.

- Check whether low-volume programs require speed reduction for consistency.

- Confirm that linked devices such as readers, incubators, or centrifugation steps are not creating queue buildup.

- Retune or escalate service review if repeated drift appears over 3 consecutive runs.
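
Two of the checklist items lend themselves to simple automation: logging interruptions above 60 seconds and spotting drift across 3 consecutive runs. The sketch below is illustrative only; the 5% tolerance used to define drift is an assumption, since the checklist does not fix a value.

```python
def long_interruptions(interruptions_s, threshold_s=60):
    """Interruptions long enough to be logged under the 60-second rule."""
    return [s for s in interruptions_s if s > threshold_s]


def needs_service_review(run_mean_cycle_s, baseline_s, tolerance_pct=5.0, consecutive=3):
    """True if `consecutive` runs in a row exceed baseline by more than tolerance_pct."""
    limit = baseline_s * (1 + tolerance_pct / 100)
    streak = 0
    for value in run_mean_cycle_s:
        if value > limit:
            streak += 1
            if streak >= consecutive:
                return True
        else:
            streak = 0  # a run back within tolerance resets the count
    return False


print(long_interruptions([30, 61, 60, 200]))
print(needs_service_review([66, 70, 71, 72], baseline_s=65))
```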

These actions are particularly relevant in environments built around fluidic precision and micro-efficiency. When labs support sensitive transitions from R&D to production, stable timing supports more than throughput. It also supports traceability, predictable staffing, lower deviation burden, and better cross-team planning.

FAQ for users managing plate-based throughput

Is the fastest system always the best choice?

No. If higher speed increases rework, intervention frequency, or variability across long runs, daily output may fall instead of rise. Stable end-to-end cycle time is usually more valuable than peak speed.

How often should timing data be reviewed?

For high-use platforms, weekly review is a practical baseline. After process changes, maintenance, or sample matrix shifts, an immediate 10-plate to 20-plate verification run is advisable.

What is a reasonable output metric for operators?

A good metric combines plates per hour, interventions per shift, and repeat rate. Looking at only one number can hide real workflow losses.

Microplate processing time data has the most value when it is tied to real workflow behavior, not isolated instrument claims. For operators, it clarifies where minutes are lost, where precision tradeoffs begin, and which changes truly increase output across an 8-hour shift or a multi-day campaign. For teams benchmarking liquid handling, microfluidic precision, centrifugation, and bioconsistent lab infrastructure, accurate timing analysis supports better equipment selection, smoother implementation, and more reliable daily production.

If your operation needs clearer benchmarks for plate-based throughput, workflow stability, or scale-relevant lab performance, now is the right time to review your timing model in detail. Contact us to discuss your application, request a tailored evaluation framework, or learn more about precision-focused solutions for microplate handling and integrated lab execution.

