Robotic Arm Repeatability Metrics Can Hide Real Variance

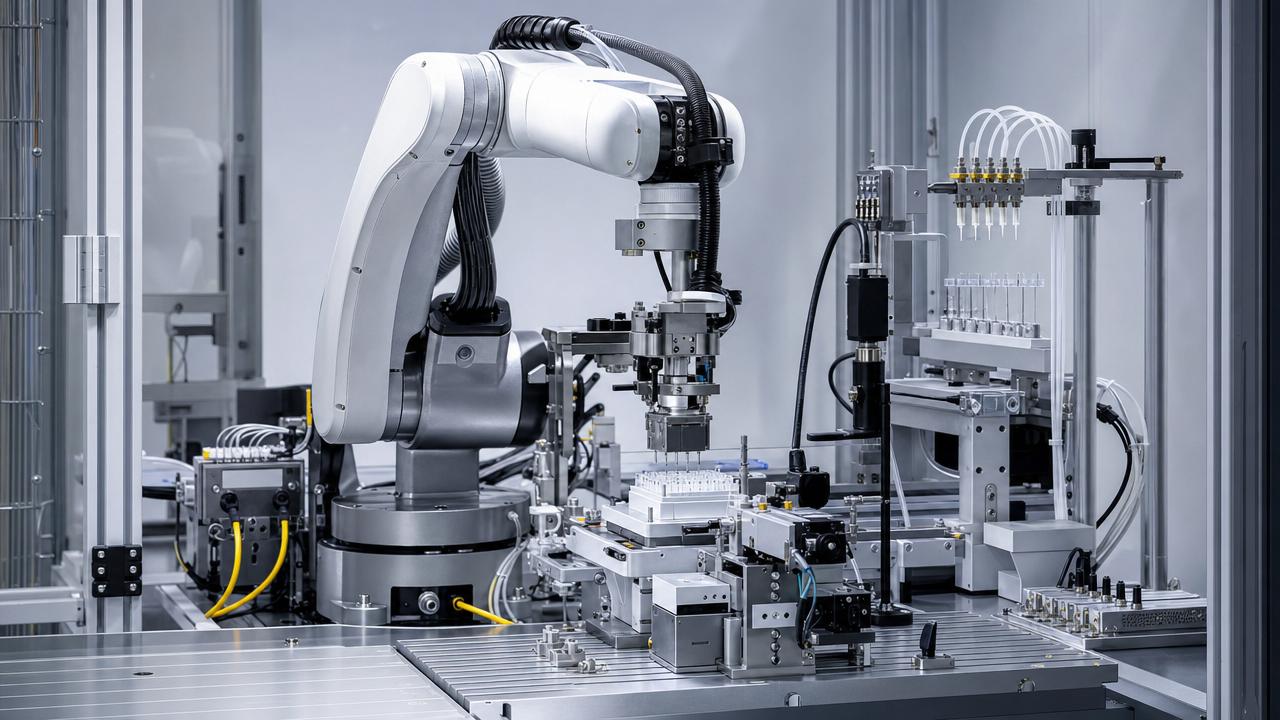

For technical evaluators comparing automation platforms, robotic arm repeatability metrics are useful—but rarely sufficient. A tight published repeatability value can signal good mechanical design, yet it does not reliably predict how a robot will behave under changing payloads, different end effectors, dynamic motion profiles, thermal drift, calibration age, or real process constraints. In practice, the gap between brochure repeatability and application stability is where many integration risks emerge.

For buyers and assessment teams in precision manufacturing, lab automation, fluid handling, and regulated production environments, the real question is not “What is the robot’s best repeatability number?” but “How much variance will the system introduce in my actual process?” That distinction matters when the process window is narrow, the tooling is sensitive, and downstream quality costs are high.

This article explains why robotic arm repeatability metrics can hide real variance, what technical evaluators should examine beyond the headline figure, and how to build a more reliable comparison framework for automation selection.

Why the published repeatability number is only a partial truth

Most vendors present repeatability as a single, attractive specification, usually stated as a ± value in millimeters and measured under controlled test conditions, commonly following a standardized procedure such as ISO 9283. That number reflects the robot's ability to return to the same taught point repeatedly, not its ability to hit an absolutely correct point across a full working envelope, across all orientations, or under every operating condition.

For technical evaluators, this distinction is essential. A robot may demonstrate excellent point return in a lab-standard test while still showing meaningful process variation once the payload changes, the wrist orientation shifts, acceleration increases, or the cycle path becomes more complex. In other words, the published metric may describe best-case consistency, not application-level consistency.

Repeatability also tends to compress multidimensional behavior into one value. It says little about how errors accumulate through joints, how motion degrades near reach limits, how compliance changes under torque, or how the robot behaves after long operating periods. In high-precision environments, those omitted variables can matter more than the headline specification itself.

What robotic arm repeatability metrics actually measure—and what they do not

To assess robotic arm repeatability metrics properly, evaluators must first understand the boundaries of the metric. Repeatability typically measures how tightly the attained positions cluster around their own average when the robot approaches the same commanded point repeatedly under fixed conditions. The distance between that average and the commanded point is a separate quantity, accuracy. Repeatability is therefore a measure of consistency, not necessarily of correctness, process capability, or outcome reliability.
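To make the distinction concrete, the sketch below computes both figures from one set of measured positions. It assumes the convention commonly associated with ISO 9283: repeatability as the mean distance from the cluster center plus three standard deviations, and accuracy as the distance from the commanded point to the cluster center. The function name `pose_metrics` and the sample coordinates are illustrative assumptions, not vendor data.

```python
import math
import statistics

def pose_metrics(commanded, attained):
    """Return (accuracy, repeatability) in the units of the inputs.

    commanded: (x, y, z) target position.
    attained:  measured (x, y, z) positions from repeated approaches.
    """
    n = len(attained)
    # Barycenter (mean) of the attained positions.
    center = tuple(sum(p[i] for p in attained) / n for i in range(3))
    # Accuracy: offset between the commanded point and the barycenter.
    accuracy = math.dist(commanded, center)
    # Repeatability: mean distance to the barycenter plus three
    # sample standard deviations of those distances.
    dists = [math.dist(p, center) for p in attained]
    repeatability = statistics.mean(dists) + 3 * statistics.stdev(dists)
    return accuracy, repeatability

# Hypothetical measurements (mm): a tight cluster that sits 0.5 mm off target.
commanded = (100.0, 0.0, 0.0)
attained = [
    (100.50, 0.01, 0.00),
    (100.51, 0.00, 0.00),
    (100.49, -0.01, 0.00),
    (100.50, 0.00, 0.01),
    (100.50, 0.00, -0.01),
]
accuracy, repeatability = pose_metrics(commanded, attained)
print(f"accuracy offset: {accuracy:.3f} mm, repeatability: {repeatability:.3f} mm")
```

In this made-up data set the cluster is very tight while the whole cluster is displaced from the commanded point, which is exactly the situation a repeatability figure alone cannot reveal.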

It usually does capture return-to-point variation in a standardized test setup with a defined payload, path type, and measurement methodology. That makes it a valuable proxy for mechanical precision and control stability. If the number is poor, that is a red flag. If the number is strong, however, it should be treated as a starting point rather than final proof.

It often does not capture absolute positioning accuracy, path accuracy during continuous motion, variance across different speeds, variance after thermal soak, sensitivity to end-of-arm-tooling changes, or stability under application-specific forces. It also does not directly quantify process output variation, such as dispense consistency, placement quality, seal integrity, or interaction with delicate components.

For lab-scale production, bioprocess support, and fluidic-precision systems, this limitation is especially important. A robotic arm that repeats to a nominal point very well may still introduce unacceptable variability into pipetting alignment, vial handling, microreactor interfacing, or centrifuge loading if the process depends on dynamic motion, orientation-sensitive contact, or low-tolerance transfer steps.

Where real-world variance appears despite a strong specification

The most common evaluation mistake is assuming that a strong repeatability figure travels unchanged into production reality. In practice, variance enters through several mechanisms that may not be visible in a datasheet.

Payload change is one major source. A robot tested at a light or nominal load may behave differently with heavier grippers, tubing bundles, vision hardware, fluid manifolds, or changing center-of-gravity conditions. Even if the system stays within rated payload limits, structural deflection and servo compensation behavior can change enough to affect process quality.

Speed and acceleration are another source. Some applications require gentle point-to-point motion, while others demand high throughput with aggressive acceleration profiles. Higher dynamic forces can amplify vibration, overshoot, settling time issues, and tool-tip instability. A robot that looks excellent in slow validation routines may perform differently at production cycle rates.
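Settling behavior is one dynamic effect that can be quantified directly from a logged tool-tip trace. The following is a minimal sketch, assuming the evaluator has position-error samples recorded after the commanded stop; the function name `settling_time`, the 0.05 mm band, and the sample values are hypothetical.

```python
def settling_time(times, errors, band):
    """Return the first time after which |error| stays within +/- band.

    Returns None if the trace ends outside the band.
    """
    settled_at = None
    for t, e in zip(times, errors):
        if abs(e) > band:
            settled_at = None   # left the band, so any earlier entry is void
        elif settled_at is None:
            settled_at = t      # entered the band
    return settled_at

# Hypothetical trace: time since commanded stop (ms) and tool-tip error (mm).
times_ms = [0, 10, 20, 30, 40, 50, 60]
errors_mm = [0.30, -0.15, 0.08, -0.04, 0.02, -0.01, 0.01]

settle = settling_time(times_ms, errors_mm, band=0.05)
print(f"settled within +/-0.05 mm after {settle} ms")
```

Running the same routine on traces captured at validation speed and at production speed gives a like-for-like view of how much extra dwell time the faster profile needs before the tool is usable.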

Tooling variation is equally important. End effectors change effective stiffness, inertia, cable routing loads, and wrist torque. A vacuum gripper, a pipetting head, a torque tool, and a custom fluidic probe all impose different mechanical realities. The more application-specific the tooling, the less meaningful a generic repeatability number becomes on its own.

Environmental conditions also matter. Temperature shifts, floor vibration, enclosure airflow, nearby machinery, humidity effects on materials, and maintenance intervals can all change motion behavior. In facilities where precision dispensing, sample transfer, or sterile interface operations occur, subtle environmental influences can push a nominally capable robot outside a narrow process window.

Finally, variance often appears over time. Calibration drift, wear in transmission components, tool changes, collisions, and long-term thermal cycling may gradually alter system performance. A procurement decision based only on acceptance-day repeatability may underestimate lifecycle risk.
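Time-dependent variance can be estimated from periodic re-measurements of a reference point. The sketch below fits a straight line to offset-versus-runtime data with ordinary least squares and projects when a limit would be reached. It assumes drift is roughly linear over the observed window, which may not hold for thermal effects that saturate. The names `drift_rate` and `limit_mm` and all sample values are illustrative.

```python
def drift_rate(hours, offsets_mm):
    """Least-squares slope of offset versus runtime, in mm per hour."""
    n = len(hours)
    mean_h = sum(hours) / n
    mean_o = sum(offsets_mm) / n
    sxx = sum((h - mean_h) ** 2 for h in hours)
    sxy = sum((h - mean_h) * (o - mean_o) for h, o in zip(hours, offsets_mm))
    return sxy / sxx

# Hypothetical reference-point checks taken every two hours of runtime.
hours = [0, 2, 4, 6, 8]
offsets_mm = [0.000, 0.010, 0.020, 0.030, 0.040]

rate = drift_rate(hours, offsets_mm)

# Assumed process limit on reference-point offset (mm).
limit_mm = 0.10
hours_to_limit = (limit_mm - offsets_mm[-1]) / rate if rate > 0 else None
print(f"drift: {rate:.4f} mm/h, limit reached in about {hours_to_limit:.0f} more hours")
```

A projection like this can inform how often a reference check or recalibration is scheduled, rather than relying on the acceptance-day figure.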

Why technical evaluators should distinguish repeatability, accuracy, and process capability

One of the clearest ways to avoid misinterpretation is to separate three questions: Can the robot return to the same point? Can it reach the correct point in space? And can it deliver a stable process result? These are related, but they are not interchangeable.

Repeatability addresses the first question. Absolute accuracy addresses the second. Process capability addresses the third. In many real applications, the third question is the most commercially important because quality outcomes, throughput stability, deviation rates, and rework costs all depend on process consistency rather than on a single motion metric.
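Process capability is often summarized with the Cpk index, which compares the distance from the process mean to the nearest specification limit against three standard deviations. The sketch below applies that standard formula to two made-up data sets with identical scatter but different centering; the function name `cpk`, the specification limits, and the samples are assumptions for illustration.

```python
import statistics

def cpk(samples, lsl, usl):
    """Process capability index: min(USL - mean, mean - LSL) / (3 * sigma)."""
    mu = statistics.mean(samples)
    sigma = statistics.stdev(samples)
    return min(usl - mu, mu - lsl) / (3 * sigma)

# Hypothetical placement offsets (mm) against a +/-0.10 mm process window.
lsl, usl = -0.10, 0.10
centered = [-0.02, -0.01, 0.00, 0.01, 0.02]
shifted = [x + 0.06 for x in centered]   # same scatter, biased by 0.06 mm

cpk_centered = cpk(centered, lsl, usl)
cpk_shifted = cpk(shifted, lsl, usl)
print(f"Cpk centered: {cpk_centered:.2f}, Cpk shifted: {cpk_shifted:.2f}")
```

Both data sets would report the same repeatability, yet the shifted one consumes most of the process window, which is why a capability measure on the process output tells evaluators more than the motion metric alone.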

For example, in automated liquid handling or vessel interfacing, a robot with only moderate raw positioning figures but strong process compensation may outperform a robot with a better published repeatability number, provided the overall system manages alignment, vision correction, force control, and stable tool approach more effectively. The process result is what matters.

This is why technical evaluators should compare robotic arm repeatability metrics alongside path accuracy, settling performance, tool-center-point stability, drift over time, and application output data. The winning platform is not always the one with the smallest brochure figure. It is the one that controls relevant variance under actual operating conditions.

How hidden variance affects regulated and precision-sensitive workflows

In regulated sectors and high-value R&D environments, hidden motion variance is not just a technical inconvenience. It can become a compliance, yield, and reliability issue. When systems handle biologics, reactive chemistries, sterile consumables, or sub-microliter transfers, seemingly small deviations may have outsized downstream consequences.

Consider a robot transferring labware into a microfluidic docking station. A repeatability number alone does not reveal whether angular approach variation, vibration at stop, or slight tool sag under load could damage interfaces or create inconsistent seals. Likewise, in automated sample preparation, tiny differences in positional behavior may affect aspiration depth, tip engagement, liquid contact, or cross-contamination risk.

In pilot-scale and scale-up settings, hidden variance can also undermine comparability across batches, instruments, or sites. If robotic motion contributes to inconsistent handling forces, timing delays, or vessel interactions, process characterization becomes less clean. This makes root-cause analysis harder and can introduce uncertainty into validation efforts.

For procurement teams supporting GMP-oriented or quality-critical operations, that means the evaluation should connect motion metrics to failure modes. Ask not only whether the robot is precise, but whether its residual variance can influence process outcomes, documentation burden, preventive maintenance frequency, or operator intervention rates.

What to request from vendors beyond the headline specification

Technical evaluators can improve decision quality significantly by asking vendors for performance evidence that goes beyond standard repeatability claims. The goal is not to reject the metric, but to contextualize it.

First, request the test conditions behind the published figure. Ask about payload, reach position, path type, approach direction, speed, environmental conditions, sample size, and measurement method. A number without context has limited comparison value.

Second, ask for performance at multiple payloads and orientations. If your application uses custom end effectors, tubing, probes, or variable loads, request data that reflects those realities. A robot may perform differently near maximum reach, at wrist-extreme angles, or with off-axis loading.

Third, seek dynamic performance data rather than only static point tests. This can include path accuracy, overshoot, settling time, contour deviation, and stability at target production speeds. In many automation tasks, especially fluidic and lab workflows, the transition into and out of the target position matters as much as the final position itself.

Fourth, ask about drift and revalidation intervals. How does the robot perform after extended runtime? What recalibration practices are needed? What happens after tool changes or maintenance events? Long-term stability often has greater operational value than a narrowly optimized initial test result.

Fifth, request application-relevant demonstrations. If your process involves tube-to-port engagement, vial transport, pipetting deck access, centrifuge loading, or reactor sampling, ask for a representative test. A vendor willing to validate under realistic conditions usually reduces evaluation uncertainty.

A practical framework for comparing automation platforms

For technical assessment teams, the most effective comparison approach is a layered model. Start with robotic arm repeatability metrics as an initial screening criterion, but do not treat them as the decision endpoint. Instead, evaluate the platform across mechanical, dynamic, process, integration, and lifecycle dimensions.

At the mechanical level, examine repeatability, payload margin, reach envelope behavior, wrist stiffness, and mount stability. At the dynamic level, review speed-dependent variation, settling behavior, path fidelity, and vibration characteristics. At the process level, focus on output quality under realistic tasks.

At the integration level, assess how the robot interacts with vision systems, force sensing, tool changers, liquid handling devices, safety systems, and supervisory software. Compensation strategies can significantly influence real-world performance. A less impressive raw robot may become a better solution if the full automation stack manages variation more intelligently.

At the lifecycle level, consider serviceability, recalibration burden, spare parts support, software maintainability, and vendor transparency. A platform that starts strong but drifts unpredictably or requires frequent intervention may deliver poorer total value than a slightly less precise but more stable alternative.

A useful decision matrix should therefore include at least these categories: published repeatability, tested repeatability under your load, dynamic motion behavior, process output capability, environmental robustness, drift over time, integration fit, maintenance burden, and validation support. This gives evaluators a more decision-relevant picture than brochure metrics alone.
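One simple way to operationalize such a matrix is a weighted score. The sketch below uses the categories listed above; the weights, the 1 to 5 scoring scale, and the two platforms are entirely hypothetical and would need to be set by the evaluation team for its own process.

```python
def weighted_score(scores, weights):
    """Weighted average of category scores; weights need not sum to 1."""
    total_weight = sum(weights.values())
    return sum(scores[k] * w for k, w in weights.items()) / total_weight

# Hypothetical weights reflecting a precision-sensitive application.
weights = {
    "published_repeatability": 1,
    "repeatability_under_load": 3,
    "dynamic_behavior": 2,
    "process_output": 3,
    "environmental_robustness": 1,
    "drift_over_time": 2,
    "integration_fit": 2,
    "maintenance_burden": 1,
    "validation_support": 1,
}

# Hypothetical scores on a 1 (poor) to 5 (excellent) scale.
platform_a = {  # best brochure figure, weaker elsewhere
    "published_repeatability": 5, "repeatability_under_load": 3,
    "dynamic_behavior": 2, "process_output": 3,
    "environmental_robustness": 3, "drift_over_time": 2,
    "integration_fit": 3, "maintenance_burden": 3, "validation_support": 2,
}
platform_b = {  # average brochure figure, stronger under real conditions
    "published_repeatability": 3, "repeatability_under_load": 4,
    "dynamic_behavior": 4, "process_output": 4,
    "environmental_robustness": 3, "drift_over_time": 4,
    "integration_fit": 4, "maintenance_burden": 3, "validation_support": 4,
}

score_a = weighted_score(platform_a, weights)
score_b = weighted_score(platform_b, weights)
print(f"platform A: {score_a:.2f}, platform B: {score_b:.2f}")
```

The value of the exercise lies less in the final number than in forcing the team to agree on weights before vendor data arrives.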

How to align the metric with actual application risk

The right interpretation of robotic arm repeatability metrics depends on the tolerance structure of the application. Not every process requires the same depth of scrutiny. If the task involves broad pick-and-place tolerances and little quality sensitivity, a standard repeatability figure may be adequate for early-stage selection.

But if the application includes tight clearances, contact-sensitive interfaces, low-volume dispensing, sterile manipulations, fragile consumables, or regulated traceability, then the evaluation standard should rise accordingly. The narrower the allowable process window, the less safe it is to rely on a single repeatability number.

A practical way to align evaluation effort with risk is to map robot motion variance to specific failure consequences. What happens if the arm approaches 0.2 mm off target? What if the wrist angle shifts slightly at speed? What if thermal drift accumulates over a shift? If the answer is damaged consumables, failed docking, inconsistent dispense geometry, or increased operator recovery, then application-specific testing is justified.
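The mapping from variance sources to consequences can start with a simple error budget. The sketch below combines several contributors in two ways: a worst-case sum, and a root-sum-square (RSS) estimate that assumes the contributors are independent. The contributor names, their magnitudes, and the 0.2 mm allowable offset are assumptions chosen only to illustrate the arithmetic.

```python
import math

def stack_up(contributors):
    """Return (worst_case, rss) for a dict of error magnitudes in mm."""
    worst_case = sum(abs(v) for v in contributors.values())
    # RSS is only meaningful if the contributors are statistically independent.
    rss = math.sqrt(sum(v * v for v in contributors.values()))
    return worst_case, rss

# Hypothetical error contributors at the tool tip (mm).
contributors = {
    "published_repeatability": 0.02,
    "tool_deflection_under_load": 0.08,
    "thermal_drift_over_shift": 0.10,
    "fixture_tolerance": 0.15,
}
allowable_mm = 0.20   # assumed process tolerance

worst_case, rss = stack_up(contributors)
print(f"worst case: {worst_case:.2f} mm, RSS: {rss:.3f} mm, allowable: {allowable_mm:.2f} mm")
```

With these illustrative numbers the published repeatability is the smallest term in the budget, and the combined estimate sits close to or beyond the allowable offset, which would justify application-specific testing.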

This risk-based approach is especially relevant in multidisciplinary environments where benchtop precision must scale toward industrial relevance. The closer the robot sits to a critical process interface, the more evaluators should care about real variance instead of nominal repeatability alone.

Conclusion: use repeatability as a filter, not a verdict

Robotic arm repeatability metrics remain important because they provide a useful baseline indicator of precision potential. However, they can hide real variance when removed from the conditions under which they were measured. For technical evaluators, the key is to treat the metric as one input in a broader assessment of motion behavior, process capability, and operational risk.

A strong repeatability specification should prompt better questions, not automatic confidence. How does the robot behave under your payload, your speed, your tooling, your environment, and your validation expectations? How much variance reaches the process itself? And what evidence shows that performance will remain stable over time?

When those questions are answered systematically, teams make better automation decisions—especially in precision-sensitive lab, bioprocess, chemical, and advanced manufacturing settings. The best platform is rarely the one with the most attractive standalone number. It is the one that controls meaningful variance where your process can least afford it.

Expert Insights

Chief Security Architect

Dr. Thorne specializes in the intersection of structural engineering and digital resilience. He has advised three G7 governments on industrial infrastructure security.
