When Automated Dilution Factor Precision Starts to Slip

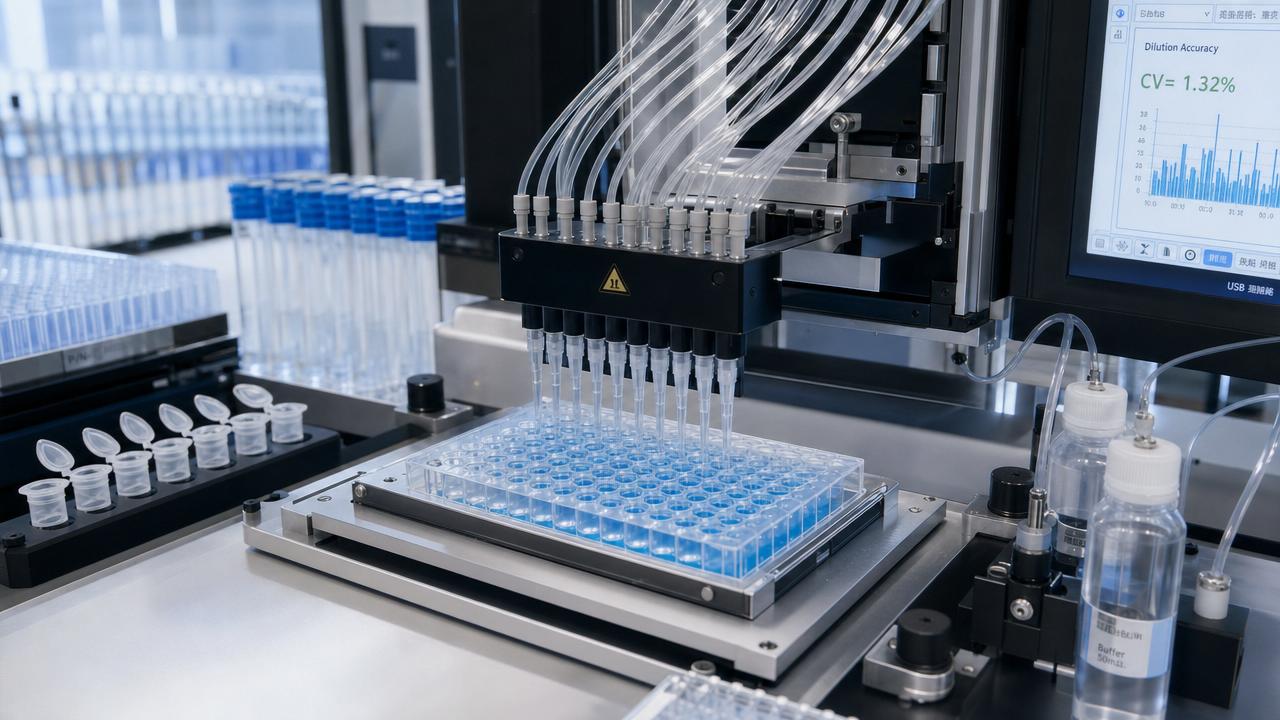

When automated dilution factor precision begins to drift, even routine workflows can produce inconsistent data, wasted reagents, and costly downstream errors. For operators working with sensitive assays, microfluidic systems, or automated liquid handling platforms, understanding the early signs of precision loss is essential. This article explores why automated dilution factor precision slips, what it means in daily lab operations, and how to restore reliable performance before quality and throughput are affected.

What does automated dilution factor precision actually mean in day-to-day lab work?

For operators, automated dilution factor precision is not just a theoretical specification on a vendor datasheet. It is the practical ability of an automated liquid handling system, pipetting platform, or microfluidic device to reproduce the intended dilution ratio again and again across multiple runs, users, and sample types. If a workflow calls for a 1:10 or 1:100 dilution, precision determines whether every well, tube, or channel receives nearly identical proportions of sample and diluent.
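As a rough illustration of what "nearly identical proportions" means in numbers, the sketch below computes the achieved dilution factor for a few replicate wells and summarizes their spread as a percent coefficient of variation (%CV). It assumes the common convention that a 1:10 dilution means one part sample in ten parts total volume. The function names and the replicate volumes are hypothetical, not taken from any instrument software.

```python
from statistics import mean, stdev

def dilution_factor(sample_ul, diluent_ul):
    """Total volume divided by sample volume (10.0 for a 1:10 dilution).

    Assumes '1:10' means 1 part sample in 10 parts total.
    """
    if sample_ul <= 0:
        raise ValueError("sample volume must be positive")
    return (sample_ul + diluent_ul) / sample_ul

def percent_cv(values):
    """Sample standard deviation as a percentage of the mean."""
    return 100.0 * stdev(values) / mean(values)

# Hypothetical measured (sample, diluent) volumes in microliters for four wells.
replicates = [(10.0, 90.0), (9.8, 90.3), (10.1, 89.9), (10.2, 90.1)]
factors = [dilution_factor(s, d) for s, d in replicates]

print("achieved factors:", [round(f, 3) for f in factors])
print("spread (%CV):", round(percent_cv(factors), 2))
```

A low %CV across wells indicates good precision even if the mean factor is slightly off target. Whether the mean matches the intended ratio is a separate accuracy question.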

This matters because most analytical and production-support workflows depend on dilution integrity. In immunoassays, cell-based screening, qPCR setup, formulation development, and reaction optimization, even a small deviation in volume transfer can change signal intensity, concentration-response curves, or product quality interpretation. In high-throughput environments, the resulting errors can propagate silently across hundreds of samples before anyone recognizes the root cause.

Operators often notice the problem indirectly. A standard curve becomes less linear. Replicates spread wider than expected. Controls remain inside limits one day and drift the next. Reagent consumption rises without a clear explanation. These symptoms may look like assay variability, but the underlying issue is often declining automated dilution factor precision.

Why does automated dilution factor precision start to slip even when the system still appears to be working?

This is one of the most common operator questions because a platform can still move liquid, complete runs, and pass a basic startup check while precision is already degrading. In many labs, the drift begins gradually rather than as a sudden failure. That makes it especially dangerous in regulated or high-value workflows.

The first cause is mechanical wear. Pipette seals, valves, plungers, syringes, and dispensing channels all change over time. Small changes in friction, elasticity, or alignment can alter aspirate and dispense consistency, particularly at low volumes. In microfluidic architectures, channel contamination or pressure instability can also shift the effective delivered ratio.

The second cause is liquid-property mismatch. Many automation methods are developed using water-like liquids, but production and research samples rarely behave that way. Viscous buffers, protein-rich solutions, solvents, surfactant-containing reagents, and volatile compounds all affect aspiration speed, droplet formation, retention, and mixing efficiency. When methods are not adjusted for fluid behavior, automated dilution factor precision suffers even if the hardware is technically functional.

The third cause is environmental variation. Temperature shifts can influence liquid density and viscosity. Evaporation becomes significant during long deck times or with small-volume transfers. Air bubbles caused by improper priming or inconsistent tip immersion can change delivered volumes. Even deck vibration or compressed-air instability may contribute in sensitive systems.

A fourth cause is software and workflow design. Incorrect liquid classes, unrealistic aspiration speeds, incomplete pre-wetting, poor mixing cycles, and poorly sequenced steps often create apparent hardware issues. In reality, the instrument is doing exactly what the method tells it to do, but the method is not robust enough for the application.

Which early warning signs should operators watch before precision loss becomes a bigger quality problem?

The most useful approach is to look for repeatable warning patterns rather than isolated anomalies. When automated dilution factor precision begins to decline, the first signs usually show up in trend data, operator observations, and subtle process inefficiencies.

Watch for increased replicate variability, especially in low-volume steps. If identical samples begin producing wider response ranges, dilution inconsistency should be investigated early. Also monitor whether standard curves require more frequent reruns, controls move closer to action limits, or assay recovery becomes less stable across shifts.
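One way to make "controls move closer to action limits" concrete is a simple control-chart style check. The sketch below assumes warning and action limits at 2 and 3 standard deviations from the target, which is a common convention but not something this article specifies. Substitute the limits your own quality system defines. All names and values are illustrative.

```python
def control_status(value, target, sd, warn_k=2.0, action_k=3.0):
    """Classify one control result by its distance from target in SD units.

    warn_k and action_k are assumed multipliers, not values from the article.
    """
    z = abs(value - target) / sd
    if z >= action_k:
        return "action"
    if z >= warn_k:
        return "warning"
    return "ok"

def recent_warnings(values, target, sd, window=5):
    """Count results in the last `window` runs that are not 'ok'."""
    return sum(control_status(v, target, sd) != "ok" for v in values[-window:])

# Hypothetical control recoveries (%) from consecutive runs.
history = [100.0, 101.0, 104.5, 95.5, 106.5]
print([control_status(v, target=100.0, sd=2.0) for v in history])
print("non-ok results in last 3 runs:", recent_warnings(history, 100.0, 2.0, window=3))
```

A rising count of warnings over consecutive runs is the kind of repeatable pattern worth investigating before any single result crosses an action limit.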

Visual clues are also important. Residual droplets on tips, inconsistent meniscus shape, delayed dispensing, foaming, splashing, or partial well wetting may indicate liquid handling instability. In micro-scale systems, pressure fluctuations, bubble formation, and unexplained dead-volume changes can point to the same underlying precision drift.

Operators should also pay attention to hidden operational signals: more failed plates, more manual interventions, more recalibration requests, or a rising dependence on repeat testing. None of these alone proves a precision issue, but together they strongly suggest that automated dilution factor precision is slipping.

Quick diagnostic table for common warning signs

Are some applications more vulnerable when automated dilution factor precision drops?

Yes, and this is where risk assessment becomes practical. Not every workflow reacts equally to precision drift. Some applications can tolerate a small variation without serious consequence, while others become unreliable very quickly. Operators should understand where their own process sits on that spectrum.

Low-volume workflows are especially sensitive. If the platform routinely dispenses sub-microliter or low-microliter volumes, a small absolute error becomes a large percentage error. Serial dilutions also magnify mistakes because each step depends on the previous one, so relative errors compound multiplicatively. A 2% bias in each step's dilution factor, for example, grows to roughly 8% by the fourth step, and a slight error at the beginning can distort the entire concentration ladder.
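The compounding effect in serial dilutions can be sketched with a few lines of arithmetic. The example below assumes every step shares the same relative bias in its dilution factor, which is a simplification: real systems also show random step-to-step variation. The function names and numbers are hypothetical.

```python
def serial_dilution_concentrations(c0, nominal_factor, relative_bias, steps):
    """Concentration after each step when every step's actual factor is
    nominal_factor * (1 + relative_bias)."""
    actual_factor = nominal_factor * (1.0 + relative_bias)
    concentrations = []
    c = c0
    for _ in range(steps):
        c = c / actual_factor
        concentrations.append(c)
    return concentrations

def cumulative_relative_error(relative_bias, steps):
    """Relative error of the final concentration versus its nominal value."""
    return (1.0 / (1.0 + relative_bias)) ** steps - 1.0

# Hypothetical 1:10 ladder, four steps, each step over-diluting by 2%.
ladder = serial_dilution_concentrations(1000.0, 10.0, 0.02, 4)
print([round(c, 4) for c in ladder])
print("final-step error (%):", round(100 * cumulative_relative_error(0.02, 4), 2))
```

Because the error term is raised to the number of steps, shortening the ladder or tightening the first transfers tends to pay off more than tuning the last ones.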

Biological assays are another high-risk category. Cell health studies, enzyme assays, and biologic formulation testing may respond strongly to concentration shifts, mixing differences, or localized gradients. The same is true in pharmaceutical and chemical development settings where bench-scale screening data must support scale-up or procurement decisions. If automated dilution factor precision is poor, downstream conclusions may be misleading even when all other process steps seem controlled.

Continuous and semi-continuous fluidic workflows deserve special attention as well. In these environments, dilution is not always a single pipetting step but part of a tightly coupled flow architecture. Pressure balance, channel geometry, sensor feedback, and residence time all influence precision. A minor hardware or control shift can therefore affect both concentration accuracy and process stability.

How can operators tell whether the problem is hardware, method setup, or the liquid itself?

A structured troubleshooting sequence works better than replacing parts at random. Start by separating the effects of the liquid from the effects of the instrument. If the same method performs well with a water-based verification fluid but poorly with the real sample matrix, the liquid properties or method settings are likely contributing factors. If the issue appears with both fluids, hardware or calibration is the more likely suspect.

Next, compare channels, heads, or dispensing paths. If one channel consistently deviates, inspect seals, nozzles, tubing, alignment, or blockage. If all channels drift in a similar way, the root cause may be environmental, procedural, or software-related. Review aspiration depth, pre-wet steps, air gaps, dispense height, and mix protocols. Small method assumptions often produce large dilution effects.
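The two comparisons described above (verification fluid versus real sample, and channel versus channel) can be written down as a small triage rule. This is only a sketch of that reasoning, not a validated diagnostic: the 3% CV limit, the function name, and the channel labels are all assumptions chosen for illustration.

```python
def triage(cv_water, cv_sample, limit=3.0):
    """Suggest where to look first, given per-channel %CV for a water-like
    verification fluid and for the real sample matrix.

    `limit` is an assumed acceptance threshold in %CV.
    Returns (category, list_of_suspect_channels).
    """
    bad_water = sorted(ch for ch, cv in cv_water.items() if cv > limit)
    bad_sample = sorted(ch for ch, cv in cv_sample.items() if cv > limit)

    if not bad_water and not bad_sample:
        return ("within limit", [])
    if not bad_water:
        # Fine with water, poor with the real matrix.
        return ("liquid properties or method settings", bad_sample)
    if len(bad_water) == len(cv_water):
        # Every channel is off even with the verification fluid.
        return ("system-wide: environment, procedure, software, or calibration", bad_water)
    # Only some channels are off with the verification fluid.
    return ("channel-specific hardware", bad_water)

# Hypothetical results for a four-channel head.
water = {"ch1": 1.1, "ch2": 1.3, "ch3": 6.2, "ch4": 1.0}
sample = {"ch1": 1.8, "ch2": 2.0, "ch3": 7.5, "ch4": 1.9}
print(triage(water, sample))
```

The output is a starting point for inspection, such as checking seals, nozzles, or tubing on the flagged channel, and should be confirmed by direct examination.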

It is also valuable to perform gravimetric or colorimetric verification at representative volumes rather than only at nominal calibration points. Many systems perform acceptably at mid-range volumes but lose automated dilution factor precision near the lower end of their operational range. Testing under actual use conditions gives more realistic insight than relying on factory benchmarks alone.
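A minimal gravimetric check might look like the sketch below. It converts balance readings to volumes using the density of water at about 20 °C (0.9982 mg/µL) and reports systematic error and %CV. This deliberately ignores the air-buoyancy and evaporation corrections that formal procedures apply, so treat it as an approximation. The function name and example masses are hypothetical.

```python
from statistics import mean, stdev

def gravimetric_summary(masses_mg, nominal_ul, density_mg_per_ul=0.9982):
    """Summarize dispensed volume from balance readings.

    Simplification: volume = mass / density, with no buoyancy or
    evaporation correction. Default density is water near 20 degrees C.
    """
    volumes = [m / density_mg_per_ul for m in masses_mg]
    avg = mean(volumes)
    return {
        "mean_ul": avg,
        "systematic_error_pct": 100.0 * (avg - nominal_ul) / nominal_ul,
        "cv_pct": 100.0 * stdev(volumes) / avg,
    }

# Hypothetical readings at a mid-range and a low-range volume.
mid_range = gravimetric_summary([49.8, 50.1, 49.9, 50.0, 49.7], nominal_ul=50.0)
low_range = gravimetric_summary([1.92, 2.08, 1.85, 2.11, 1.97], nominal_ul=2.0)
for label, result in (("50 uL", mid_range), ("2 uL", low_range)):
    print(label, {k: round(v, 2) for k, v in result.items()})
```

Running the same summary at several volumes, including the lowest one the method actually uses, shows whether spread widens toward the bottom of the range.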

For advanced labs, linking trend logs with maintenance history can reveal patterns. Precision may degrade after a certain number of cycles, after specific solvent exposure, or during seasonal humidity changes. This kind of evidence helps operators move from reactive troubleshooting to predictive control.
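As one possible way to turn trend logs into a predictive signal, the sketch below fits a straight line to %CV against cumulative cycle count and estimates how many cycles remain before an assumed limit is reached. Linear drift is itself an assumption, since real degradation may be stepwise or seasonal. The names, the limit, and the data are hypothetical.

```python
def linear_fit(xs, ys):
    """Ordinary least-squares slope and intercept for y = a + b * x."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept

def cycles_until_limit(cycles, cvs, limit):
    """Estimated cycles remaining before the fitted %CV trend reaches `limit`.

    Returns None when the trend is flat or improving.
    """
    slope, intercept = linear_fit(cycles, cvs)
    if slope <= 0:
        return None
    cycles_at_limit = (limit - intercept) / slope
    return max(0.0, cycles_at_limit - cycles[-1])

# Hypothetical log: cumulative cycles since last service and observed %CV.
cycle_log = [0, 1000, 2000, 3000]
cv_log = [1.0, 1.5, 2.0, 2.5]
print("estimated cycles remaining:", cycles_until_limit(cycle_log, cv_log, limit=3.0))
```

Plotting the same log against maintenance dates or solvent changes can help show whether the drift tracks usage or some other factor.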

What are the most common operator mistakes that make automated dilution factor precision worse?

One frequent mistake is assuming that calibration alone guarantees good performance. Calibration is necessary, but it does not replace application-specific method optimization. A system can be calibrated and still perform poorly with foaming media, volatile solvents, or low-volume serial dilutions.

Another mistake is using a single liquid handling profile for every reagent. Operators under time pressure may reuse standard settings across buffers, samples, and diluents with very different physical characteristics. This saves setup time initially but often reduces automated dilution factor precision and increases rework later.

Skipping preventive maintenance is also costly. Worn consumables, aging seals, contamination buildup, and misaligned components rarely fix themselves. Because the system still appears operational, teams may postpone service until failure is obvious. By then, significant data quality damage may already have occurred.

Finally, many teams underestimate operator-to-operator variation. Inconsistent tip loading, deck setup, priming discipline, reagent equilibration, and pause timing can all influence results. Standard work instructions should clearly define these steps if consistent automated dilution factor precision is expected across shifts.

What practical steps help restore and maintain reliable automated dilution factor precision?

The fastest improvement usually comes from combining technical verification with workflow discipline. Begin with a targeted performance check using the actual volume range, reagent class, and dilution pattern used in production or routine analysis. Do not rely only on generic acceptance tests. Real-use verification identifies whether the problem is isolated or systemic.

Then review method parameters in detail. Adjust aspiration and dispense speeds to match fluid behavior. Add pre-wetting where appropriate. Reconsider air gaps, blowout settings, mixing repetitions, and dwell times. If evaporation is a factor, reduce exposure time or redesign the sequence to shorten open-deck delays. In fluidic platforms, confirm stable pressure control and clean flow paths.

Preventive maintenance should be tied to actual usage and fluid exposure, not just calendar dates. High-cycle systems, aggressive chemistries, and biologically fouling media require more frequent inspection. Documenting trend shifts alongside maintenance actions creates a stronger reliability program over time.

Training is equally important. Operators need to understand not only which buttons to press, but why certain handling rules exist. When users recognize how bubbles, immersion depth, viscosity, and environmental conditions affect automated dilution factor precision, they are better equipped to prevent repeat problems.

Recommended operator checklist before escalating the issue

When should a team stop adjusting the method and start evaluating the platform itself?

If repeated optimization fails to stabilize automated dilution factor precision, the question may no longer be procedural. Some platforms are simply not well matched to certain throughput levels, fluid properties, compliance expectations, or precision targets. This is especially true when workflows evolve from exploratory bench work to highly repeatable development or pre-production environments.

Teams should consider a deeper platform evaluation when they see chronic reruns, narrow process windows, frequent maintenance interruptions, or poor reproducibility under real-use conditions despite trained operators and validated methods. In that case, hardware architecture, sensor feedback quality, wetted-material compatibility, and regulatory documentation support become procurement-level concerns rather than operator-level fixes.

For organizations moving between lab-scale development and industrial execution, benchmark-driven review is especially useful. Comparing precision stability, fluidic consistency, serviceability, and application fit across systems helps prevent buying for headline speed while overlooking the operational importance of dilution reliability.

What should you clarify first if you need support, validation, or a new solution?

Before requesting service, procurement input, or a new automation assessment, organize the problem around a few clear questions. What dilution ratios are failing most often? At what volume range? With which sample matrices? Is the issue channel-specific, method-specific, or universal? How does performance compare with verification fluids versus actual reagents? What trend data exists before and after maintenance?

These details make conversations with engineering teams, application specialists, or suppliers far more productive. They also reduce the risk of treating symptoms instead of causes. For operators and lab managers, the goal is not merely to correct one failed run. It is to restore durable automated dilution factor precision that supports reliable data, controlled reagent use, and confident scale-up decisions.

If you need to confirm a specific solution, its parameters, an evaluation path, an implementation timeline, a quotation, or a collaboration model, start by discussing your actual fluid type, target dilution range, required repeatability, maintenance history, and throughput demands. Those details most quickly separate a temporary adjustment from a robust long-term answer.

Expert Insights

Chief Security Architect

Dr. Thorne specializes in the intersection of structural engineering and digital resilience. He has advised three G7 governments on industrial infrastructure security.
